mirror of https://github.com/huggingface/transformers.git, synced 2025-08-03 03:31:05 +06:00

translate model_sharing.md and llm_tutorial.md to chinese (#27283)

* translate model_sharing.md
* translate llm_tutorial.md to chinese
* update wrong translation
* update _toctree.yml
* update typos
* update

parent f213d5dd8c
commit e264745051

@@ -21,8 +21,12 @@
    title: Distributed training with 🤗 Accelerate
  - local: peft
    title: Load and train adapters with 🤗 PEFT
  - local: model_sharing
    title: Share your model
  - local: transformers_agents
    title: Agents tutorial
  - local: llm_tutorial
    title: Generation with LLMs
  title: Tutorials
- sections:
  - local: fast_tokenizers

docs/source/zh/llm_tutorial.md (normal file, 269 lines)

@@ -0,0 +1,269 @@

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with
the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on
an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the
specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contains specific syntax for our doc-builder (similar to MDX) that may not be
rendered properly in your Markdown viewer.

-->

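# Generation with LLMs

[[open-in-colab]]

LLMs, or Large Language Models, are the key component behind text generation. In a nutshell, they consist of large pretrained transformer models trained to predict the next word (or, more precisely, the next `token`) given some input text. Since they predict one `token` at a time, generating new sentences takes more than a single call to the model: you need to do autoregressive generation.

Autoregressive generation is the inference-time procedure of iteratively calling a model with its own generated outputs, given a few initial inputs. In 🤗 Transformers, this is handled by the [`~generation.GenerationMixin.generate`] method, which is available to all models with generative capabilities.

This tutorial will show you how to:

* Generate text with an LLM
* Avoid common pitfalls
* Take the next steps to get the most out of your LLM

Before you begin, make sure you have all the necessary libraries installed:

```bash
pip install transformers "bitsandbytes>=0.39.0" -q
```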

## Generate text

A language model trained for [causal language modeling](tasks/language_modeling) takes a sequence of text `tokens` as input and returns the probability distribution for the next `token`.

<!-- [GIF 1 -- FWD PASS] -->
<figure class="image table text-center m-0 w-full">
    <video
        style="max-width: 90%; margin: auto;"
        autoplay loop muted playsinline
        src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/assisted-generation/gif_1_1080p.mov"
    ></video>
    <figcaption>"Forward pass of an LLM"</figcaption>
</figure>

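A critical aspect of autoregressive generation with LLMs is how to select the next `token` from this probability distribution. Anything goes in this step, as long as you end up with a `token` for the next iteration. This means it can be as simple as picking the most likely `token` from the probability distribution, or as complex as applying several transformations before sampling from the resulting distribution, depending on your needs.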
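
To make the selection step concrete, here is a minimal sketch in plain Python. It is not how 🤗 Transformers implements selection; the 4-token vocabulary, the scores in `toy_logits`, and the helper names are all assumptions made up for illustration.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def select_greedy(probs):
    """Pick the index of the most likely token."""
    return max(range(len(probs)), key=lambda i: probs[i])


def select_sampled(probs, rng):
    """Draw a token index at random, weighted by its probability."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical scores for a 4-token vocabulary.
toy_logits = [2.0, 1.0, 0.5, -1.0]
toy_probs = softmax(toy_logits)

greedy_choice = select_greedy(toy_probs)
sampled_choice = select_sampled(toy_probs, random.Random(0))
```

Greedy selection always returns the same index for the same distribution, while the sampled choice depends on the random generator, which is why sampling examples usually fix a seed.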

<!-- [GIF 2 -- TEXT GENERATION] -->
<figure class="image table text-center m-0 w-full">
    <video
        style="max-width: 90%; margin: auto;"
        autoplay loop muted playsinline
        src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/assisted-generation/gif_2_1080p.mov"
    ></video>
    <figcaption>"Autoregressive generation iteratively selects the next token from a probability distribution to generate text"</figcaption>
</figure>

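The process above is repeated iteratively until some stopping condition is reached. Ideally, the stopping condition is dictated by the model, which should learn when to output an end-of-sequence (`EOS`) token. If this is not the case, generation stops when some predefined maximum length is reached.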
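
The loop and its two stopping conditions can be sketched with a toy stand-in for the model. Everything here is hypothetical: `TOY_NEXT_TOKEN` is a lookup table that plays the role of a model, and `toy_generate` only mimics the shape of the real procedure.

```python
EOS = "<eos>"

# Hypothetical stand-in for a model: maps the last token to its most likely successor.
TOY_NEXT_TOKEN = {"red": "blue", "blue": "green", "green": EOS}


def toy_next_token(tokens):
    """Return the 'most likely' next token given the sequence so far."""
    return TOY_NEXT_TOKEN.get(tokens[-1], EOS)


def toy_generate(prompt_tokens, max_new_tokens=20):
    """Greedy autoregressive loop with two stopping conditions."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):  # condition 2: predefined maximum length
        next_token = toy_next_token(tokens)
        if next_token == EOS:  # condition 1: the "model" decided to stop
            break
        tokens.append(next_token)  # feed the output back in as input
    return tokens


stopped_by_eos = toy_generate(["red"])
stopped_by_length = toy_generate(["red"], max_new_tokens=1)
```

In the first call the toy model reaches `EOS` on its own; in the second, the length limit cuts generation short after a single new token.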

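Properly setting up the `token` selection step and the stopping condition is essential to make your model behave as you expect on your task. That is why each model has an associated [`~generation.GenerationConfig`] file, which contains a good default generative parameterization and is loaded alongside your model.

Let's talk code!

<Tip>

If you're interested in basic LLM usage, our high-level [`Pipeline`](pipeline_tutorial) interface is a great starting point. However, LLMs often require advanced features like quantization and fine control of the token selection step, which is best done through [`~generation.GenerationMixin.generate`]. Autoregressive generation with LLMs is also resource-intensive and should be executed on a GPU for adequate throughput.

</Tip>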

First, you need to load the model.

```py
>>> from transformers import AutoModelForCausalLM

>>> model = AutoModelForCausalLM.from_pretrained(
...     "mistralai/Mistral-7B-v0.1", device_map="auto", load_in_4bit=True
... )
```

You'll notice two flags in the `from_pretrained` call:

- `device_map` ensures the model is moved to your GPU(s)
- `load_in_4bit` applies [4-bit dynamic quantization](main_classes/quantization) to massively reduce the resource requirements

There are other ways to initialize a model, but this is a good baseline to begin with an LLM.
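
As a rough back-of-the-envelope check on why 4-bit loading matters, the sketch below estimates the memory taken by the weights alone. The parameter count is an assumption (about 7 billion, as the "7B" in the checkpoint name suggests), and the estimate ignores quantization metadata, activations, and the generation cache.

```python
def weight_memory_gib(num_parameters, bits_per_parameter):
    """Memory needed to hold the weights alone, in GiB."""
    total_bytes = num_parameters * bits_per_parameter / 8
    return total_bytes / 1024**3


# Assumption: roughly 7 billion parameters, as the "7B" in the checkpoint name suggests.
NUM_PARAMETERS = 7_000_000_000

fp16_gib = weight_memory_gib(NUM_PARAMETERS, 16)
int4_gib = weight_memory_gib(NUM_PARAMETERS, 4)
print(f"16-bit: {fp16_gib:.1f} GiB, 4-bit: {int4_gib:.1f} GiB")
```

Under these assumptions the 4-bit weights need a quarter of the memory of 16-bit weights, which is often the difference between fitting on a single consumer GPU and not fitting at all.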

Next, you need to preprocess your text input with a [tokenizer](tokenizer_summary).

```py
>>> from transformers import AutoTokenizer

>>> tokenizer = AutoTokenizer.from_pretrained("mistralai/Mistral-7B-v0.1", padding_side="left")
>>> model_inputs = tokenizer(["A list of colors: red, blue"], return_tensors="pt").to("cuda")
```

The `model_inputs` variable holds the tokenized text input, as well as the attention mask. While [`~generation.GenerationMixin.generate`] does its best to infer the attention mask when it is not passed, we recommend passing it whenever possible for optimal results.

After tokenizing the inputs, you can call the [`~generation.GenerationMixin.generate`] method to return the generated `tokens`. The generated `tokens` should then be converted to text before printing.

```py
>>> generated_ids = model.generate(**model_inputs)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
'A list of colors: red, blue, green, yellow, orange, purple, pink,'
```

Finally, you don't need to process one sequence at a time! You can batch your inputs, which greatly improves throughput at a small latency and memory cost. All you need to do is make sure you pad your inputs properly (more on that below).

```py
>>> tokenizer.pad_token = tokenizer.eos_token  # Most LLMs don't have a pad token by default
>>> model_inputs = tokenizer(
...     ["A list of colors: red, blue", "Portugal is"], return_tensors="pt", padding=True
... ).to("cuda")
>>> generated_ids = model.generate(**model_inputs)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)
['A list of colors: red, blue, green, yellow, orange, purple, pink,',
'Portugal is a country in southwestern Europe, on the Iber']
```

And that's it! In a few lines of code, you can harness the power of an LLM.

## Common pitfalls

There are many [generation strategies](generation_strategies), and sometimes the default values may not be appropriate for your use case. If your outputs aren't aligned with what you're expecting, we've created a list of the most common pitfalls and how to avoid them.

```py
>>> from transformers import AutoModelForCausalLM, AutoTokenizer

>>> tokenizer = AutoTokenizer.from_pretrained("mistralai/Mistral-7B-v0.1")
>>> tokenizer.pad_token = tokenizer.eos_token  # Most LLMs don't have a pad token by default
>>> model = AutoModelForCausalLM.from_pretrained(
...     "mistralai/Mistral-7B-v0.1", device_map="auto", load_in_4bit=True
... )
```

### Generated output is too short/long

If not specified in the [`~generation.GenerationConfig`] file, `generate` returns up to 20 tokens by default. We highly recommend manually setting `max_new_tokens` in your `generate` call to control the maximum number of new tokens it can return. Keep in mind that LLMs (more precisely, [decoder-only models](https://huggingface.co/learn/nlp-course/chapter1/6?fw=pt)) also return the input prompt as part of the output.

```py
>>> model_inputs = tokenizer(["A sequence of numbers: 1, 2"], return_tensors="pt").to("cuda")

>>> # By default, the output will contain up to 20 tokens
>>> generated_ids = model.generate(**model_inputs)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
'A sequence of numbers: 1, 2, 3, 4, 5'

>>> # Setting `max_new_tokens` allows you to control the maximum length
>>> generated_ids = model.generate(**model_inputs, max_new_tokens=50)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
'A sequence of numbers: 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16,'
```
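
Because a decoder-only model echoes the prompt, it can help to separate the prompt tokens from the newly generated ones. The sketch below shows the idea on plain Python lists; the token ids are made up, and with real tensors the equivalent slice is `generated_ids[:, input_length:]`.

```python
# Hypothetical token ids: the first three stand for the prompt.
prompt_ids = [101, 7, 42]
generated_ids = [101, 7, 42, 8, 15, 16]  # decoder-only output echoes the prompt first

input_length = len(prompt_ids)
new_ids = generated_ids[input_length:]  # keep only what the model added

# `max_new_tokens` bounds the length of this slice, not of the whole output.
max_new_tokens = 3
within_budget = len(new_ids) <= max_new_tokens
```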

### Incorrect generation mode

By default, and unless specified in the [`~generation.GenerationConfig`] file, `generate` selects the most likely token at each iteration (greedy decoding). Depending on your task, this may be undesirable; creative tasks like chatbots or writing an essay benefit from sampling. On the other hand, input-grounded tasks like audio transcription or translation benefit from greedy decoding. Enable sampling with `do_sample=True`; you can learn more about this topic in this [blog post](https://huggingface.co/blog/how-to-generate).

```py
>>> # Set seed for reproducibility -- you don't need this unless you want full reproducibility
>>> from transformers import set_seed
>>> set_seed(42)

>>> model_inputs = tokenizer(["I am a cat."], return_tensors="pt").to("cuda")

>>> # LLM + greedy decoding = repetitive, boring output
>>> generated_ids = model.generate(**model_inputs)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
'I am a cat. I am a cat. I am a cat. I am a cat'

>>> # With sampling, the output becomes more creative!
>>> generated_ids = model.generate(**model_inputs, do_sample=True)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
'I am a cat. Specifically, I am an indoor-only cat. I'
```

### Wrong padding side

LLMs are [decoder-only](https://huggingface.co/learn/nlp-course/chapter1/6?fw=pt) architectures, meaning they continue to iterate on your input prompt. If your inputs do not have the same length, they need to be padded. Since LLMs are not trained to continue from `pad tokens`, your input needs to be left-padded. Make sure you also don't forget to pass the attention mask to generate!

```py
>>> # The tokenizer initialized above has right-padding active by default: the 1st sequence,
>>> # which is shorter, has padding on the right side. Generation fails to capture the logic.
>>> model_inputs = tokenizer(
...     ["1, 2, 3", "A, B, C, D, E"], padding=True, return_tensors="pt"
... ).to("cuda")
>>> generated_ids = model.generate(**model_inputs)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
'1, 2, 33333333333'

>>> # With left-padding, it works as expected!
>>> tokenizer = AutoTokenizer.from_pretrained("mistralai/Mistral-7B-v0.1", padding_side="left")
>>> tokenizer.pad_token = tokenizer.eos_token  # Most LLMs don't have a pad token by default
>>> model_inputs = tokenizer(
...     ["1, 2, 3", "A, B, C, D, E"], padding=True, return_tensors="pt"
... ).to("cuda")
>>> generated_ids = model.generate(**model_inputs)
>>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
'1, 2, 3, 4, 5, 6,'
```
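
To see what the padding side changes, here is a small sketch that pads lists of token ids by hand and builds the matching attention masks. It is only an illustration of the idea: `pad_batch`, `PAD_ID`, and the token ids are hypothetical, and a real tokenizer does this for you.

```python
PAD_ID = 0  # hypothetical pad token id


def pad_batch(sequences, side="left", pad_id=PAD_ID):
    """Pad token-id lists to equal length and build matching attention masks."""
    longest = max(len(seq) for seq in sequences)
    input_ids, attention_mask = [], []
    for seq in sequences:
        padding = [pad_id] * (longest - len(seq))
        mask_pad = [0] * len(padding)  # 0 = ignore this position
        real = [1] * len(seq)  # 1 = attend to this position
        if side == "left":
            input_ids.append(padding + list(seq))
            attention_mask.append(mask_pad + real)
        else:
            input_ids.append(list(seq) + padding)
            attention_mask.append(real + mask_pad)
    return input_ids, attention_mask


batch = [[5, 6, 7], [1, 2, 3, 4, 5]]
left_ids, left_mask = pad_batch(batch, side="left")
right_ids, right_mask = pad_batch(batch, side="right")
```

With left-padding, the last position of every row is a real token, so generation continues from the prompt. With right-padding, the shorter row ends in pad tokens, and the model is asked to continue from something it was never trained on.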

### Wrong prompt

Some models and tasks expect a certain input prompt format to work properly. When this format is not applied, you will get a silent performance degradation: the model works, but not as well as it would with the expected prompt. More information about prompting, including which models and tasks need care, is available in this [guide](tasks/prompting). Let's see an example with a chat LLM, which makes use of [chat templating](chat_templating):

```python
>>> tokenizer = AutoTokenizer.from_pretrained("HuggingFaceH4/zephyr-7b-alpha")
>>> model = AutoModelForCausalLM.from_pretrained(
...     "HuggingFaceH4/zephyr-7b-alpha", device_map="auto", load_in_4bit=True
... )
>>> set_seed(0)
>>> prompt = """How many helicopters can a human eat in one sitting? Reply as a thug."""
>>> model_inputs = tokenizer([prompt], return_tensors="pt").to("cuda")
>>> input_length = model_inputs.input_ids.shape[1]
>>> generated_ids = model.generate(**model_inputs, max_new_tokens=20)
>>> print(tokenizer.batch_decode(generated_ids[:, input_length:], skip_special_tokens=True)[0])
"I'm not a thug, but i can tell you that a human cannot eat"
>>> # Oh no, it did not follow our instruction to reply as a thug! Let's see what happens when we write
>>> # a better prompt and use the right template for this model (through `tokenizer.apply_chat_template`)

>>> set_seed(0)
>>> messages = [
...     {
...         "role": "system",
...         "content": "You are a friendly chatbot who always responds in the style of a thug",
...     },
...     {"role": "user", "content": "How many helicopters can a human eat in one sitting?"},
... ]
>>> model_inputs = tokenizer.apply_chat_template(messages, add_generation_prompt=True, return_tensors="pt").to("cuda")
>>> input_length = model_inputs.shape[1]
>>> generated_ids = model.generate(model_inputs, do_sample=True, max_new_tokens=20)
>>> print(tokenizer.batch_decode(generated_ids[:, input_length:], skip_special_tokens=True)[0])
'None, you thug. How bout you try to focus on more useful questions?'
>>> # As we can see, it followed a proper thug style 😎
```
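
Conceptually, a chat template turns a list of messages into a single prompt string with role markers. The sketch below illustrates that idea with a made-up format; the role tags, the `</s>` separator, and `render_chat` are assumptions for illustration, and the real template is the one shipped with each model's tokenizer.

```python
def render_chat(messages, add_generation_prompt=True):
    """Render chat messages with a hypothetical role-tag template."""
    parts = []
    for message in messages:
        parts.append(f"<|{message['role']}|>\n{message['content']}</s>\n")
    if add_generation_prompt:
        parts.append("<|assistant|>\n")  # cue the model to answer next
    return "".join(parts)


messages = [
    {"role": "system", "content": "You are a friendly chatbot who always responds in the style of a thug"},
    {"role": "user", "content": "How many helicopters can a human eat in one sitting?"},
]
prompt_text = render_chat(messages)
```

The point of the sketch is that the system instruction and the user question land in clearly separated slots, and the prompt ends exactly where the assistant's reply should begin.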

## Further resources

While the autoregressive generation process is relatively straightforward, making the most out of your LLM can be challenging because many of the components are complex and closely interrelated. The following next steps will help you dive deeper into LLM usage and understanding:

### Advanced generate usage

1. [Guide](generation_strategies) on how to control different generation methods, how to set up the generation configuration file, and how to stream the output;
2. [Guide](chat_templating) on the prompt template for chat LLMs;
3. [Guide](tasks/prompting) on how to get the most out of prompt design;
4. API reference on [`~generation.GenerationConfig`], [`~generation.GenerationMixin.generate`], and [generate-related classes](internal/generation_utils).

### LLM leaderboards

1. [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard), which focuses on the quality of open-source models;
2. [Open LLM-Perf Leaderboard](https://huggingface.co/spaces/optimum/llm-perf-leaderboard), which focuses on LLM throughput.

### Latency, throughput and memory utilization

1. [Guide](llm_tutorial_optimization) on how to optimize LLMs for speed and memory;
2. [Guide](main_classes/quantization) on quantization, such as bitsandbytes and autogptq, which shows you how to drastically reduce your memory requirements.

### Related libraries

1. [`text-generation-inference`](https://github.com/huggingface/text-generation-inference), a production-ready server for LLMs;
2. [`optimum`](https://github.com/huggingface/optimum), an extension of 🤗 Transformers that optimizes for specific hardware devices.

docs/source/zh/model_sharing.md (normal file, 238 lines)

@@ -0,0 +1,238 @@

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with
the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on
an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the
specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contains specific syntax for our doc-builder (similar to MDX) that may not be
rendered properly in your Markdown viewer.

-->

# Share a model

The last two tutorials showed how you can fine-tune a model with PyTorch, Keras, and 🤗 Accelerate for distributed setups. The next step is to share your model with the community! At Hugging Face, we believe in openly sharing knowledge and resources to democratize artificial intelligence for everyone. We encourage you to share your model with the community to help others save time and effort.

In this tutorial, you will learn two methods for sharing a trained or fine-tuned model on the [Model Hub](https://huggingface.co/models):

- Programmatically push your files to the Hub.
- Drag-and-drop your files to the Hub with the web interface.

<iframe width="560" height="315" src="https://www.youtube.com/embed/XvSGPZFEjDY" title="YouTube video player"
frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope;
picture-in-picture" allowfullscreen></iframe>

<Tip>

To share a model with the community, you need an account on [huggingface.co](https://huggingface.co/join). You can also join an existing organization or create a new one.

</Tip>

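## Repository features

Each repository on the Model Hub behaves like a typical GitHub repository. Our repositories offer versioning, commit history, and the ability to visualize differences.

The Model Hub's built-in versioning is based on git and [git-lfs](https://git-lfs.github.com/). In other words, you can treat one model as one repository, enabling greater access control and scalability. Version control allows *revisions*, a method for pinning a specific version of a model with a commit hash, tag or branch.

As a result, you can load a specific model version with the `revision` parameter:

```py
>>> from transformers import AutoModel

>>> model = AutoModel.from_pretrained(
...     "julien-c/EsperBERTo-small", revision="v2.0.1"  # tag name, or branch name, or commit hash
... )
```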
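
One way to picture what a revision pins is to look at how a file address changes with it. The sketch below builds a download URL by hand, assuming the `resolve/<revision>/<filename>` layout seen in Hub file links; `file_url` is a hypothetical helper, `config.json` is an assumed filename, and `main` is assumed to be the default branch.

```python
from urllib.parse import quote


def file_url(repo_id, filename, revision="main"):
    """Build a download URL for one file at a given revision (assumed URL layout)."""
    return f"https://huggingface.co/{repo_id}/resolve/{quote(revision, safe='')}/{filename}"


# Same repository and file, two different revisions.
tagged = file_url("julien-c/EsperBERTo-small", "config.json", revision="v2.0.1")
default = file_url("julien-c/EsperBERTo-small", "config.json")
```

Pinning a tag or commit hash means the address keeps pointing at the same content even after the default branch moves on.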

|

||||

|

||||

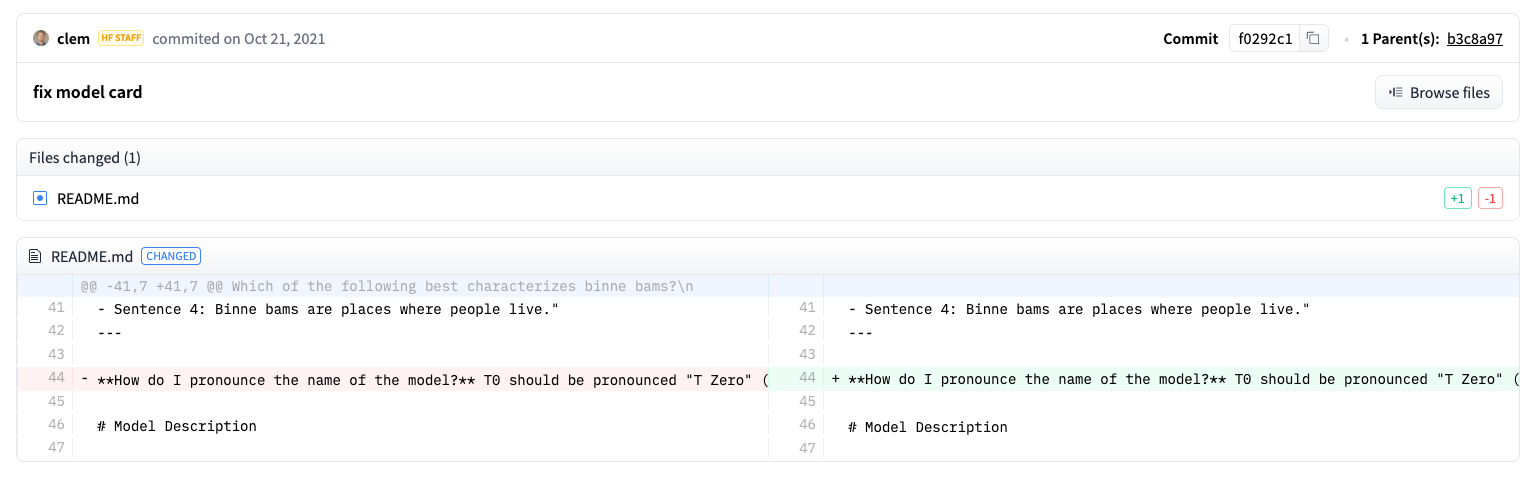

文件也可以轻松地在仓库中编辑,您可以查看提交历史记录以及差异:

|

||||

|

||||

|

||||

## Setup

Before sharing a model to the Hub, you will need your Hugging Face credentials. If you have access to a terminal, run the following command in the virtual environment where 🤗 Transformers is installed. This will store your `access token` in your Hugging Face cache folder (`~/.cache/` by default):

```bash
huggingface-cli login
```

If you are using a notebook like Jupyter or Colaboratory, make sure you have the [`huggingface_hub`](https://huggingface.co/docs/hub/adding-a-library) library installed. This library allows you to programmatically interact with the Hub.

```bash
pip install huggingface_hub
```

Then use `notebook_login` to sign in to the Hub, and follow the link [here](https://huggingface.co/settings/token) to generate a token to log in with:

```py
>>> from huggingface_hub import notebook_login

>>> notebook_login()
```

## Convert a model for all frameworks

To ensure your model can be used by someone working with a different framework, we recommend you convert and upload your model with both PyTorch and TensorFlow `checkpoints`. While users are still able to load your model from a different framework if you skip this step, it will be slower because 🤗 Transformers will need to convert the `checkpoints` on the fly.

Converting a `checkpoint` for another framework is easy. Make sure you have PyTorch and TensorFlow installed (see [here](installation) for installation instructions), and then find the specific model for your task in the other framework.

<frameworkcontent>
<pt>

Specify `from_tf=True` to convert a checkpoint from TensorFlow to PyTorch:

```py
>>> from transformers import DistilBertForSequenceClassification

>>> pt_model = DistilBertForSequenceClassification.from_pretrained("path/to/awesome-name-you-picked", from_tf=True)
>>> pt_model.save_pretrained("path/to/awesome-name-you-picked")
```
</pt>
<tf>

Specify `from_pt=True` to convert a checkpoint from PyTorch to TensorFlow:

```py
>>> from transformers import TFDistilBertForSequenceClassification

>>> tf_model = TFDistilBertForSequenceClassification.from_pretrained("path/to/awesome-name-you-picked", from_pt=True)
```

Then you can save your new TensorFlow model with its new checkpoint:

```py
>>> tf_model.save_pretrained("path/to/awesome-name-you-picked")
```
</tf>
<jax>

If a model is available in Flax, you can also convert a checkpoint from PyTorch to Flax:

```py
>>> from transformers import FlaxDistilBertForSequenceClassification

>>> flax_model = FlaxDistilBertForSequenceClassification.from_pretrained(
...     "path/to/awesome-name-you-picked", from_pt=True
... )
```
</jax>
</frameworkcontent>

## Push a model during training

<frameworkcontent>
<pt>
<Youtube id="Z1-XMy-GNLQ"/>

Sharing a model to the Hub is as simple as adding an extra parameter or callback. Remember from the [fine-tuning tutorial](training) that the `TrainingArguments` class is where you specify hyperparameters and additional training options. One of these training options is the ability to push a model directly to the Hub. Set `push_to_hub=True` in your `TrainingArguments`:

```py
>>> from transformers import TrainingArguments

>>> training_args = TrainingArguments(output_dir="my-awesome-model", push_to_hub=True)
```

Pass your training arguments as usual to [`Trainer`]:

```py
>>> from transformers import Trainer

>>> # `model`, the datasets and `compute_metrics` are defined as in the fine-tuning tutorial
>>> trainer = Trainer(
...     model=model,
...     args=training_args,
...     train_dataset=small_train_dataset,
...     eval_dataset=small_eval_dataset,
...     compute_metrics=compute_metrics,
... )
```

After you fine-tune your model, call [`~transformers.Trainer.push_to_hub`] on [`Trainer`] to push the trained model to the Hub. 🤗 Transformers will even automatically add training hyperparameters, training results and framework versions to your model card!

```py
>>> trainer.push_to_hub()
```
</pt>
<tf>

Share a model to the Hub with [`PushToHubCallback`]. In the [`PushToHubCallback`] function, add:

- An output directory for your model.
- A tokenizer.
- The `hub_model_id`, which is your Hub username and model name.

```py
>>> from transformers import PushToHubCallback

>>> push_to_hub_callback = PushToHubCallback(
...     output_dir="./your_model_save_path", tokenizer=tokenizer, hub_model_id="your-username/my-awesome-model"
... )
```

Add the callback to [`fit`](https://keras.io/api/models/model_training_apis/), and 🤗 Transformers will push the trained model to the Hub:

```py
>>> model.fit(tf_train_dataset, validation_data=tf_validation_dataset, epochs=3, callbacks=[push_to_hub_callback])
```
</tf>
</frameworkcontent>

## Use the `push_to_hub` function

You can also call `push_to_hub` directly on your model to upload it to the Hub.

Specify your model name in `push_to_hub`:

```py
>>> pt_model.push_to_hub("my-awesome-model")
```

This creates a repository under your username with the model name `my-awesome-model`. Users can now load your model with the `from_pretrained` function:

```py
>>> from transformers import AutoModel

>>> model = AutoModel.from_pretrained("your_username/my-awesome-model")
```

If you belong to an organization and want to push your model under the organization name instead, just add it to the `repo_id`:

```py
>>> pt_model.push_to_hub("my-awesome-org/my-awesome-model")
```
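
The naming rule described above can be summarized in a few lines. This is only a sketch of the convention, not the library's implementation; `full_repo_id` and the `your-username` value are hypothetical.

```python
def full_repo_id(repo_id, username):
    """Prefix a bare model name with the user's namespace; leave 'owner/name' ids alone."""
    if "/" in repo_id:
        return repo_id
    return f"{username}/{repo_id}"


personal = full_repo_id("my-awesome-model", username="your-username")
organization = full_repo_id("my-awesome-org/my-awesome-model", username="your-username")
```

A bare name ends up in the personal namespace, while an id that already contains an owner, such as an organization, is used as given.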

The `push_to_hub` function can also be used to add other files to a model repository. For example, add a tokenizer to a model repository:

```py
>>> tokenizer.push_to_hub("my-awesome-model")
```

Or perhaps you'd like to add the TensorFlow version of your fine-tuned PyTorch model:

```py
>>> tf_model.push_to_hub("my-awesome-model")
```

Now when you navigate to your Hugging Face profile, you should see your newly created model repository. Clicking on the **Files** tab will display all the files you've uploaded to the repository.

For more details on how to create and upload files to a repository, refer to the Hub documentation [here](https://huggingface.co/docs/hub/how-to-upstream).

## 使用Web界面上传

|

||||

|

||||

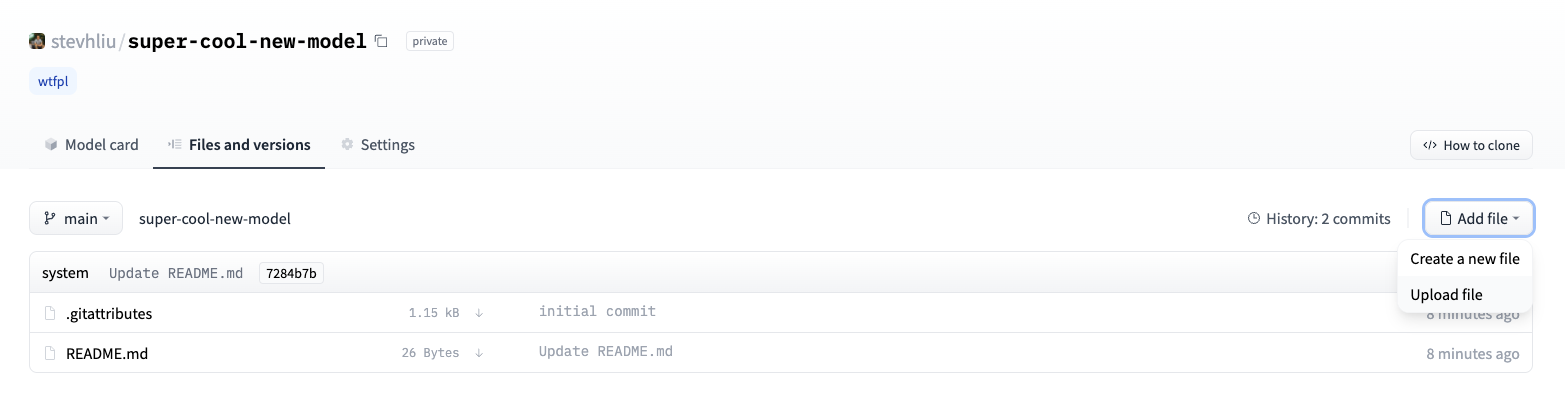

喜欢无代码方法的用户可以通过Hugging Face的Web界面上传模型。访问[huggingface.co/new](https://huggingface.co/new)创建一个新的仓库:

|

||||

|

||||

|

||||

|

||||

从这里开始,添加一些关于您的模型的信息:

|

||||

|

||||

- 选择仓库的**所有者**。这可以是您本人或者您所属的任何组织。

|

||||

- 为您的项目选择一个名称,该名称也将成为仓库的名称。

|

||||

- 选择您的模型是公开还是私有。

|

||||

- 指定您的模型的许可证使用情况。

|

||||

|

||||

现在点击**文件**选项卡,然后点击**添加文件**按钮将一个新文件上传到你的仓库。接着拖放一个文件进行上传,并添加提交信息。

|

||||

|

||||

|

||||

|

||||

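## Add a model card

To make sure users understand your model's capabilities, limitations, potential biases and ethical considerations, please add a model card to your repository. The model card is defined in the `README.md` file. You can add a model card by:

* Manually creating and uploading a `README.md` file.
* Clicking on the **Edit model card** button in your model repository.

Take a look at the DistilBert [model card](https://huggingface.co/distilbert-base-uncased) for a good example of the type of information a model card should include. For more details about other options you can control in the `README.md` file, such as a model's carbon footprint or widget examples, refer to the documentation [here](https://huggingface.co/docs/hub/models-cards).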
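
For the manual route, a model card is a Markdown file that starts with a YAML metadata header. The sketch below assembles such a file as a string; `build_model_card` is a hypothetical helper, and the license, tag, and description values are placeholders rather than recommendations.

```python
def build_model_card(model_name, license_id, tags, description):
    """Return README.md text: a YAML metadata header followed by Markdown."""
    lines = ["---", f"license: {license_id}", "tags:"]
    lines += [f"- {tag}" for tag in tags]
    lines += ["---", "", f"# {model_name}", "", description, ""]
    return "\n".join(lines)


# Placeholder values for illustration only.
card = build_model_card(
    model_name="my-awesome-model",
    license_id="apache-2.0",
    tags=["text-classification"],
    description="Describe intended uses, limitations, biases and ethical considerations here.",
)
```

Saving `card` as `README.md` and uploading it to the repository gives you a starting point that you can then refine in the web editor.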
