mirror of

https://github.com/huggingface/transformers.git

synced 2025-07-31 02:02:21 +06:00

Update model share tutorial (#15288)

* add model sharing tutorial * 🖍 apply feedback from review * 📝 make edits * 🖍 fix formatting * 📝 convert from pt checkpoint to flax * 📝 final review

parent

c98a6ac211

commit

cabd6d26a2

@ -22,7 +22,7 @@

|

||||

- local: accelerate

|

||||

title: Distributed training with 🤗 Accelerate

|

||||

- local: model_sharing

|

||||

title: Model sharing and uploading

|

||||

title: Share a model

|

||||

- local: tokenizer_summary

|

||||

title: Summary of the tokenizers

|

||||

- local: multilingual

|

||||

|

||||

@ -1,4 +1,4 @@

|

||||

<!--Copyright 2020 The HuggingFace Team. All rights reserved.

|

||||

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

|

||||

the License. You may obtain a copy of the License at

|

||||

@ -10,10 +10,14 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

|

||||

specific language governing permissions and limitations under the License.

|

||||

-->

|

||||

|

||||

# Model sharing and uploading

|

||||

# Share a model

|

||||

|

||||

In this page, we will show you how to share a model you have trained or fine-tuned on new data with the community on

|

||||

the [model hub](https://huggingface.co/models).

|

||||

The last two tutorials showed how you can fine-tune a model with PyTorch, Keras, and 🤗 Accelerate for distributed setups. The next step is to share your model with the community! At Hugging Face, we believe in openly sharing knowledge and resources to democratize artificial intelligence for everyone. We encourage you to consider sharing your model with the community to help others save time and resources.

|

||||

|

||||

In this tutorial, you will learn two methods for sharing a trained or fine-tuned model on the [Model Hub](https://huggingface.co/models):

|

||||

|

||||

- Programmatically push your files to the Hub.

|

||||

- Drag-and-drop your files to the Hub with the web interface.

|

||||

|

||||

<iframe width="560" height="315" src="https://www.youtube.com/embed/XvSGPZFEjDY" title="YouTube video player"

|

||||

frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope;

|

||||

@ -21,373 +25,195 @@ picture-in-picture" allowfullscreen></iframe>

|

||||

|

||||

<Tip>

|

||||

|

||||

You will need to create an account on [huggingface.co](https://huggingface.co/join) for this.

|

||||

|

||||

Optionally, you can join an existing organization or create a new one.

|

||||

To share a model with the community, you need an account on [huggingface.co](https://huggingface.co/join). You can also join an existing organization or create a new one.

|

||||

|

||||

</Tip>

|

||||

|

||||

We have seen in the [training tutorial](training) how to fine-tune a model on a given task. You have probably

|

||||

done something similar on your task, either using the model `fit()` method, directly in your own training loop or using the

|

||||

[`Trainer`] class. Let's see how you can share the result on the

|

||||

[model hub](https://huggingface.co/models).

|

||||

## Repository features

|

||||

|

||||

## Model versioning

|

||||

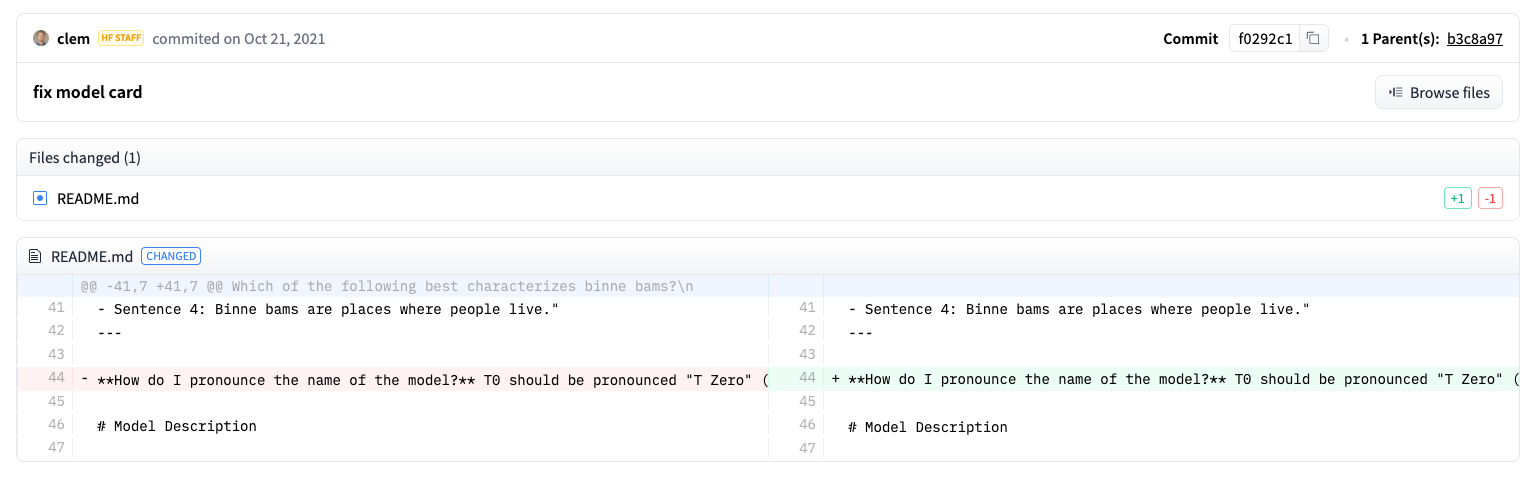

Each repository on the Model Hub behaves like a typical GitHub repository. Our repositories offer versioning, commit history, and the ability to visualize differences.

|

||||

|

||||

Since version v3.5.0, the model hub has built-in model versioning based on git and git-lfs. It is based on the paradigm

|

||||

that one model *is* one repo.

|

||||

The Model Hub's built-in versioning is based on git and [git-lfs](https://git-lfs.github.com/). In other words, you can treat one model as one repository, enabling greater access control and scalability. Version control allows *revisions*, a method for pinning a specific version of a model with a commit hash, tag or branch.

|

||||

|

||||

This allows:

|

||||

As a result, you can load a specific model version with the `revision` parameter:

|

||||

|

||||

- built-in versioning

|

||||

- access control

|

||||

- scalability

|

||||

|

||||

This is built around *revisions*, which is a way to pin a specific version of a model, using a commit hash, tag or

|

||||

branch.

|

||||

|

||||

For instance:

|

||||

|

||||

```python

|

||||

```py

|

||||

>>> model = AutoModel.from_pretrained(

|

||||

... "julien-c/EsperBERTo-small", revision="v2.0.1" # tag name, or branch name, or commit hash

|

||||

... )

|

||||

```

|

||||

|

||||

## Push your model from Python

|

||||

Files are also easily edited in a repository, and you can view the commit history as well as the difference:

|

||||

|

||||

### Preparation

|

||||

|

||||

|

||||

The first step is to make sure your credentials to the hub are stored somewhere. This can be done in two ways. If you

|

||||

have access to a terminal, you can just run the following command in the virtual environment where you installed 🤗

|

||||

Transformers:

|

||||

## Setup

|

||||

|

||||

Before sharing a model to the Hub, you will need your Hugging Face credentials. If you have access to a terminal, run the following command in the virtual environment where 🤗 Transformers is installed. This will store your access token in your Hugging Face cache folder (`~/.cache/` by default):

|

||||

|

||||

```bash

|

||||

huggingface-cli login

|

||||

```

|

||||

|

||||

It will store your access token in the Hugging Face cache folder (by default `~/.cache/`).

|

||||

|

||||

If you don't have easy access to a terminal (for instance in a Colab session), you can find a token linked to your

|

||||

account by going on [huggingface.co](https://huggingface.co/), click on your avatar on the top left corner, then on

|

||||

*Edit profile* on the left, just beneath your profile picture. In the submenu *API Tokens*, you will find your API

|

||||

token that you can just copy.

|

||||

|

||||

### Directly push your model to the hub

|

||||

|

||||

<Youtube id="Z1-XMy-GNLQ"/>

|

||||

|

||||

Once you have an API token (either stored in the cache or copied and pasted in your notebook), you can directly push a

|

||||

finetuned model you saved in `save_directory` by calling:

|

||||

|

||||

```python

|

||||

finetuned_model.push_to_hub("my-awesome-model")

|

||||

```

|

||||

|

||||

If your API token is not stored in the cache, you will need to pass it with `use_auth_token=your_token`.

|

||||

This is also the case for all the examples below, so we won't mention it again.

|

||||

|

||||

This will create a repository in your namespace name `my-awesome-model`, so anyone can now run:

|

||||

|

||||

```python

|

||||

from transformers import AutoModel

|

||||

|

||||

model = AutoModel.from_pretrained("your_username/my-awesome-model")

|

||||

```

|

||||

|

||||

Even better, you can combine this push to the hub with the call to `save_pretrained`:

|

||||

|

||||

```python

|

||||

finetuned_model.save_pretrained(save_directory, push_to_hub=True, repo_name="my-awesome-model")

|

||||

```

|

||||

|

||||

If you are a premium user and want your model to be private, just add `private=True` to this call.

|

||||

|

||||

If you are a member of an organization and want to push it inside the namespace of the organization instead of yours,

|

||||

just add `organization=my_amazing_org`.

|

||||

|

||||

### Add new files to your model repo

|

||||

|

||||

Once you have pushed your model to the hub, you might want to add the tokenizer, or a version of your model for another

|

||||

framework (TensorFlow, PyTorch, Flax). This is super easy to do! Let's begin with the tokenizer. You can add it to the

|

||||

repo you created before like this

|

||||

|

||||

```python

|

||||

tokenizer.push_to_hub("my-awesome-model")

|

||||

```

|

||||

|

||||

If you know its URL (it should be `https://huggingface.co/username/repo_name`), you can also do:

|

||||

|

||||

```python

|

||||

tokenizer.push_to_hub(repo_url=my_repo_url)

|

||||

```

|

||||

|

||||

And that's all there is to it! It's also a very easy way to fix a mistake if one of the files online had a bug.

|

||||

|

||||

To add a model for another backend, it's also super easy. Let's say you have fine-tuned a TensorFlow model and want to

|

||||

add the pytorch model files to your model repo, so that anyone in the community can use it. The following allows you to

|

||||

directly create a PyTorch version of your TensorFlow model:

|

||||

|

||||

```python

|

||||

from transformers import AutoModel

|

||||

|

||||

model = AutoModel.from_pretrained(save_directory, from_tf=True)

|

||||

```

|

||||

|

||||

You can also replace `save_directory` by the identifier of your model (`username/repo_name`) if you don't

|

||||

have a local save of it anymore. Then, just do the same as before:

|

||||

|

||||

```python

|

||||

model.push_to_hub("my-awesome-model")

|

||||

```

|

||||

|

||||

or

|

||||

|

||||

```python

|

||||

model.push_to_hub(repo_url=my_repo_url)

|

||||

```

|

||||

|

||||

## Use your terminal and git

|

||||

|

||||

<Youtube id="rkCly_cbMBk"/>

|

||||

|

||||

### Basic steps

|

||||

|

||||

In order to upload a model, you'll need to first create a git repo. This repo will live on the model hub, allowing

|

||||

users to clone it and you (and your organization members) to push to it.

|

||||

|

||||

You can create a model repo directly from [the /new page on the website](https://huggingface.co/new).

|

||||

|

||||

Alternatively, you can use the `transformers-cli`. The next steps describe that process:

|

||||

|

||||

Go to a terminal and run the following command. It should be in the virtual environment where you installed 🤗

|

||||

Transformers, since that command `transformers-cli` comes from the library.

|

||||

If you are using a notebook like Jupyter or Colaboratory, make sure you have the [`huggingface_hub`](https://huggingface.co/docs/hub/adding-a-library) library installed. This library allows you to programmatically interact with the Hub.

|

||||

|

||||

```bash

|

||||

transformers-cli login

|

||||

pip install huggingface_hub

|

||||

```

|

||||

|

||||

Once you are logged in with your model hub credentials, you can start building your repositories. To create a repo:

|

||||

Then use `notebook_login` to sign in to the Hub, and follow the link [here](https://huggingface.co/settings/token) to generate a token to log in with:

|

||||

|

||||

```bash

|

||||

transformers-cli repo create your-model-name

|

||||

```py

|

||||

>>> from huggingface_hub import notebook_login

|

||||

|

||||

>>> notebook_login()

|

||||

```

|

||||

|

||||

If you want to create a repo under a specific organization, you should add a *--organization* flag:

|

||||

## Convert a model for all frameworks

|

||||

|

||||

```bash

|

||||

transformers-cli repo create your-model-name --organization your-org-name

|

||||

```

|

||||

To ensure your model can be used by someone working with a different framework, we recommend you convert and upload your model with both PyTorch and TensorFlow checkpoints. While users are still able to load your model from a different framework if you skip this step, it will be slower because 🤗 Transformers will need to convert the checkpoint on-the-fly.

|

||||

|

||||

This creates a repo on the model hub, which can be cloned.

|

||||

Converting a checkpoint for another framework is easy. Make sure you have PyTorch and TensorFlow installed (see [here](installation) for installation instructions), and then find the specific model for your task in the other framework.

|

||||

|

||||

```bash

|

||||

# Make sure you have git-lfs installed

|

||||

# (https://git-lfs.github.com/)

|

||||

git lfs install

|

||||

For example, suppose you trained DistilBert for sequence classification in PyTorch and want to convert it to its TensorFlow equivalent. Load the TensorFlow equivalent of your model for your task, and specify `from_pt=True` so 🤗 Transformers will convert the PyTorch checkpoint to a TensorFlow checkpoint:

|

||||

|

||||

git clone https://huggingface.co/username/your-model-name

|

||||

```

|

||||

|

||||

When you have your local clone of your repo and lfs installed, you can then add/remove from that clone as you would

|

||||

with any other git repo.

|

||||

|

||||

```bash

|

||||

# Commit as usual

|

||||

cd your-model-name

|

||||

echo "hello" >> README.md

|

||||

git add . && git commit -m "Update from $USER"

|

||||

```

|

||||

|

||||

We are intentionally not wrapping git too much, so that you can go on with the workflow you're used to and the tools

|

||||

you already know.

|

||||

|

||||

The only learning curve you might have compared to regular git is the one for git-lfs. The documentation at

|

||||

[git-lfs.github.com](https://git-lfs.github.com/) is decent, but we'll work on a tutorial with some tips and tricks

|

||||

in the coming weeks!

|

||||

|

||||

Additionally, if you want to change multiple repos at once, the [change_config.py script](https://github.com/huggingface/efficient_scripts/blob/main/change_config.py) can probably save you some time.

|

||||

|

||||

### Make your model work on all frameworks

|

||||

|

||||

<!--TODO Sylvain: make this automatic during the upload

|

||||

-->

|

||||

|

||||

You probably have your favorite framework, but so will other users! That's why it's best to upload your model with both

|

||||

PyTorch *and* TensorFlow checkpoints to make it easier to use (if you skip this step, users will still be able to load

|

||||

your model in another framework, but it will be slower, as it will have to be converted on the fly). Don't worry, it's

|

||||

super easy to do (and in a future version, it might all be automatic). You will need to install both PyTorch and

|

||||

TensorFlow for this step, but you don't need to worry about the GPU, so it should be very easy. Check the [TensorFlow

|

||||

installation page](https://www.tensorflow.org/install/pip#tensorflow-2.0-rc-is-available) and/or the [PyTorch

|

||||

installation page](https://pytorch.org/get-started/locally/#start-locally) to see how.

|

||||

|

||||

First check that your model class exists in the other framework, that is try to import the same model by either adding

|

||||

or removing TF. For instance, if you trained a [`DistilBertForSequenceClassification`], try to type

|

||||

|

||||

```python

|

||||

>>> from transformers import TFDistilBertForSequenceClassification

|

||||

```

|

||||

|

||||

and if you trained a [`TFDistilBertForSequenceClassification`], try to type

|

||||

|

||||

```python

|

||||

>>> from transformers import DistilBertForSequenceClassification

|

||||

```

|

||||

|

||||

This will give back an error if your model does not exist in the other framework (something that should be pretty rare

|

||||

since we're aiming for full parity between the two frameworks). In this case, skip this and go to the next step.
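
The "add or remove TF" rule above can be sketched as a small helper. This is only an illustration: `to_framework_class_name` and `FRAMEWORK_PREFIXES` are hypothetical names, not part of 🤗 Transformers, and the helper assumes the naming convention shown in this page (no prefix for PyTorch, `TF` for TensorFlow, `Flax` for Flax). It only builds the name; whether the class actually exists still has to be checked with the import itself.

```python
# Class-name prefixes per framework; PyTorch classes carry no prefix.
# Assumption: the convention described in the text holds for your model class.
FRAMEWORK_PREFIXES = {"pt": "", "tf": "TF", "flax": "Flax"}


def to_framework_class_name(class_name, framework):
    """Rename a model class for another framework by swapping its prefix."""
    if framework not in FRAMEWORK_PREFIXES:
        raise ValueError(f"unknown framework: {framework!r}")
    base = class_name
    # Strip an existing TensorFlow or Flax prefix, if there is one.
    for prefix in ("Flax", "TF"):
        if base.startswith(prefix):
            base = base[len(prefix):]
            break
    return FRAMEWORK_PREFIXES[framework] + base


print(to_framework_class_name("DistilBertForSequenceClassification", "tf"))
print(to_framework_class_name("TFDistilBertForSequenceClassification", "pt"))
```

The resulting name is the one to try in the `from transformers import ...` line.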

|

||||

|

||||

Now, if you trained your model in PyTorch and have to create a TensorFlow version, adapt the following code to your

|

||||

model class:

|

||||

|

||||

```python

|

||||

```py

|

||||

>>> tf_model = TFDistilBertForSequenceClassification.from_pretrained("path/to/awesome-name-you-picked", from_pt=True)

|

||||

```

|

||||

|

||||

Then save your new TensorFlow model with its new checkpoint:

|

||||

|

||||

```py

|

||||

>>> tf_model.save_pretrained("path/to/awesome-name-you-picked")

|

||||

```

|

||||

|

||||

and if you trained your model in TensorFlow and have to create a PyTorch version, adapt the following code to your

|

||||

model class:

|

||||

Similarly, specify `from_tf=True` to convert a checkpoint from TensorFlow to PyTorch:

|

||||

|

||||

```python

|

||||

```py

|

||||

>>> pt_model = DistilBertForSequenceClassification.from_pretrained("path/to/awesome-name-you-picked", from_tf=True)

|

||||

>>> pt_model.save_pretrained("path/to/awesome-name-you-picked")

|

||||

```

|

||||

|

||||

That's all there is to it!

|

||||

If a model is available in Flax, you can also convert a checkpoint from PyTorch to Flax:

|

||||

|

||||

### Check the directory before pushing to the model hub.

|

||||

|

||||

Make sure there are no garbage files in the directory you'll upload. It should only have:

|

||||

|

||||

- a *config.json* file, which saves the [configuration](main_classes/configuration) of your model ;

|

||||

- a *pytorch_model.bin* file, which is the PyTorch checkpoint (unless you can't have it for some reason) ;

|

||||

- a *tf_model.h5* file, which is the TensorFlow checkpoint (unless you can't have it for some reason) ;

|

||||

- a *special_tokens_map.json*, which is part of your [tokenizer](main_classes/tokenizer) save;

|

||||

- a *tokenizer_config.json*, which is part of your [tokenizer](main_classes/tokenizer) save;

|

||||

- files named *vocab.json*, *vocab.txt*, *merges.txt*, or similar, which contain the vocabulary of your tokenizer, part

|

||||

of your [tokenizer](main_classes/tokenizer) save;

|

||||

- maybe a *added_tokens.json*, which is part of your [tokenizer](main_classes/tokenizer) save.

|

||||

|

||||

Other files can safely be deleted.
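
The checklist above could be automated with a few lines of standard-library Python. This is a minimal sketch, not part of 🤗 Transformers: `find_unexpected_files` and `EXPECTED_FILES` are hypothetical names, and the file set is copied from the list above, so extend it for your own tokenizer files (a `README.md` model card or `.gitattributes` in a cloned repo would also be flagged unless you add them).

```python
from pathlib import Path

# File names taken from the checklist above. Treat this set as an assumption
# and extend it to match the files your tokenizer actually saves.
EXPECTED_FILES = {
    "config.json",
    "pytorch_model.bin",
    "tf_model.h5",
    "special_tokens_map.json",
    "tokenizer_config.json",
    "vocab.json",
    "vocab.txt",
    "merges.txt",
    "added_tokens.json",
}


def find_unexpected_files(directory, expected=EXPECTED_FILES):
    """Return the sorted names of files in `directory` that are not in `expected`.

    Only regular files directly inside `directory` are inspected;
    subdirectories are ignored.
    """
    return sorted(
        path.name
        for path in Path(directory).iterdir()
        if path.is_file() and path.name not in expected
    )
```

Calling `find_unexpected_files("path/to/awesome-name-you-picked")` returns the candidates for deletion. Nothing is removed automatically, so you can review the list first.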

|

||||

|

||||

|

||||

## Uploading your files

|

||||

|

||||

Once the repo is cloned, you can add the model, configuration and tokenizer files. For instance, saving the model and

|

||||

tokenizer files:

|

||||

|

||||

```python

|

||||

>>> model.save_pretrained("path/to/repo/clone/your-model-name")

|

||||

>>> tokenizer.save_pretrained("path/to/repo/clone/your-model-name")

|

||||

```

|

||||

|

||||

Or, if you're using the Trainer API

|

||||

|

||||

```python

|

||||

>>> trainer.save_model("path/to/awesome-name-you-picked")

|

||||

>>> tokenizer.save_pretrained("path/to/repo/clone/your-model-name")

|

||||

```

|

||||

|

||||

You can then add these files to the staging environment and verify that they have been correctly staged with the `git status` command:

|

||||

|

||||

```bash

|

||||

git add --all

|

||||

git status

|

||||

```

|

||||

|

||||

Finally, the files should be committed:

|

||||

|

||||

```bash

|

||||

git commit -m "First version of the your-model-name model and tokenizer."

|

||||

```

|

||||

|

||||

And pushed to the remote:

|

||||

|

||||

```bash

|

||||

git push

|

||||

```

|

||||

|

||||

This will upload the folder containing the weights, tokenizer and configuration we have just prepared.

|

||||

|

||||

|

||||

### Add a model card

|

||||

|

||||

To make sure everyone knows what your model can do, what its limitations, potential bias or ethical considerations are,

|

||||

please add a README.md model card to your model repo. You can just create it, or there's also a convenient button

|

||||

titled "Add a README.md" on your model page. Model card documentation can be found [here](https://huggingface.co/docs/hub/model-repos) (meta-suggestions

|

||||

are welcome).

|

||||

|

||||

<Tip>

|

||||

|

||||

Model cards used to live in the 🤗 Transformers repo under *model_cards/*, but for consistency and scalability we

|

||||

migrated every model card from the repo to its corresponding huggingface.co model repo.

|

||||

|

||||

</Tip>

|

||||

|

||||

If your model is fine-tuned from another model coming from the model hub (all 🤗 Transformers pretrained models do),

|

||||

don't forget to link to its model card so that people can fully trace how your model was built.

|

||||

|

||||

|

||||

### Using your model

|

||||

|

||||

Your model now has a page on huggingface.co/models 🔥

|

||||

|

||||

Anyone can load it from code:

|

||||

|

||||

```python

|

||||

>>> tokenizer = AutoTokenizer.from_pretrained("namespace/awesome-name-you-picked")

|

||||

>>> model = AutoModel.from_pretrained("namespace/awesome-name-you-picked")

|

||||

```

|

||||

|

||||

You may specify a revision by using the `revision` flag in the `from_pretrained` method:

|

||||

|

||||

```python

|

||||

>>> tokenizer = AutoTokenizer.from_pretrained(

|

||||

... "julien-c/EsperBERTo-small", revision="v2.0.1" # tag name, or branch name, or commit hash

|

||||

```py

|

||||

>>> flax_model = FlaxDistilBertForSequenceClassification.from_pretrained(

|

||||

... "path/to/awesome-name-you-picked", from_pt=True

|

||||

... )

|

||||

```

|

||||

|

||||

## Workflow in a Colab notebook

|

||||

## Push a model with `Trainer`

|

||||

|

||||

If you're in a Colab notebook (or similar) with no direct access to a terminal, here is the workflow you can use to

|

||||

upload your model. You can execute each one of them in a cell by adding a ! at the beginning.

|

||||

<Youtube id="Z1-XMy-GNLQ"/>

|

||||

|

||||

First you need to install *git-lfs* in the environment used by the notebook:

|

||||

Sharing a model to the Hub is as simple as adding an extra parameter or callback. Remember from the [fine-tuning tutorial](training), the [`TrainingArguments`] class is where you specify hyperparameters and additional training options. One of these training options includes the ability to push a model directly to the Hub. Set `push_to_hub=True` in your [`TrainingArguments`]:

|

||||

|

||||

```bash

|

||||

sudo apt-get install git-lfs

|

||||

```py

|

||||

>>> training_args = TrainingArguments(output_dir="my-awesome-model", push_to_hub=True)

|

||||

```

|

||||

|

||||

Then you can either create a repo directly from [huggingface.co](https://huggingface.co/), or use the

|

||||

`transformers-cli` to create it:

|

||||

Pass your training arguments as usual to [`Trainer`]:

|

||||

|

||||

|

||||

```bash

|

||||

transformers-cli login

|

||||

transformers-cli repo create your-model-name

|

||||

```py

|

||||

>>> trainer = Trainer(

|

||||

... model=model,

|

||||

... args=training_args,

|

||||

... train_dataset=small_train_dataset,

|

||||

... eval_dataset=small_eval_dataset,

|

||||

... compute_metrics=compute_metrics,

|

||||

... )

|

||||

```

|

||||

|

||||

Once it's created, you can clone it and configure it (replace username by your username on huggingface.co):

|

||||

After you fine-tune your model, call [`~transformers.Trainer.push_to_hub`] on [`Trainer`] to push the trained model to the Hub. 🤗 Transformers will even automatically add training hyperparameters, training results and framework versions to your model card!

|

||||

|

||||

```bash

|

||||

git lfs install

|

||||

|

||||

git clone https://username:password@huggingface.co/username/your-model-name

|

||||

# Alternatively if you have a token,

|

||||

# you can use it instead of your password

|

||||

git clone https://username:token@huggingface.co/username/your-model-name

|

||||

|

||||

cd your-model-name

|

||||

git config --global user.email "email@example.com"

|

||||

# Tip: using the same email as for your huggingface.co account will link your commits to your profile

|

||||

git config --global user.name "Your name"

|

||||

```py

|

||||

>>> trainer.push_to_hub()

|

||||

```

|

||||

|

||||

Once you've saved your model inside, and your clone is set up with the right remote URL, you can add it and push it with

|

||||

usual git commands.

|

||||

## Push a model with `PushToHubCallback`

|

||||

|

||||

```bash

|

||||

git add .

|

||||

git commit -m "Initial commit"

|

||||

git push

|

||||

TensorFlow users can enable the same functionality with [`PushToHubCallback`]. When you create the [`PushToHubCallback`], specify:

|

||||

|

||||

- An output directory for your model.

|

||||

- A tokenizer.

|

||||

- The `hub_model_id`, which is your Hub username and model name.

|

||||

|

||||

```py

|

||||

>>> from transformers.keras_callbacks import PushToHubCallback

|

||||

|

||||

>>> push_to_hub_callback = PushToHubCallback(

|

||||

... output_dir="./your_model_save_path", tokenizer=tokenizer, hub_model_id="your-username/my-awesome-model"

|

||||

... )

|

||||

```

|

||||

|

||||

Add the callback to [`fit`](https://keras.io/api/models/model_training_apis/), and 🤗 Transformers will push the trained model to the Hub:

|

||||

|

||||

```py

|

||||

>>> model.fit(tf_train_dataset, validation_data=tf_validation_dataset, epochs=3, callbacks=[push_to_hub_callback])

|

||||

```

|

||||

|

||||

## Use the `push_to_hub` function

|

||||

|

||||

You can also call `push_to_hub` directly on your model to upload it to the Hub.

|

||||

|

||||

Specify your model name in `push_to_hub`:

|

||||

|

||||

```py

|

||||

>>> pt_model.push_to_hub("my-awesome-model")

|

||||

```

|

||||

|

||||

This creates a repository under your username with the model name `my-awesome-model`. Users can now load your model with the `from_pretrained` function:

|

||||

|

||||

```py

|

||||

>>> from transformers import AutoModel

|

||||

|

||||

>>> model = AutoModel.from_pretrained("your_username/my-awesome-model")

|

||||

```

|

||||

|

||||

If you belong to an organization and want to push your model under the organization name instead, add the `organization` parameter:

|

||||

|

||||

```py

|

||||

>>> pt_model.push_to_hub("my-awesome-model", organization="my-awesome-org")

|

||||

```
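
To make the naming explicit, here is a small sketch of how the repository identifier and its URL are composed from a namespace (your username or an organization) and a model name. The helpers `repo_id` and `repo_url` are hypothetical, written only for illustration; the URL pattern `https://huggingface.co/username/repo_name` is the one quoted in this page.

```python
HUB_URL = "https://huggingface.co"


def repo_id(model_name, namespace):
    """Join a namespace (username or organization) and a model name."""
    if "/" in model_name or "/" in namespace:
        raise ValueError("namespace and model name must not contain '/'")
    return f"{namespace}/{model_name}"


def repo_url(model_name, namespace):
    """Return the web URL of the model repository on the Hub."""
    return f"{HUB_URL}/{repo_id(model_name, namespace)}"


# A model pushed under a user account versus under an organization.
print(repo_id("my-awesome-model", "your_username"))
print(repo_url("my-awesome-model", "my-awesome-org"))
```

The string returned by `repo_id` is what other users pass to `from_pretrained`.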

|

||||

|

||||

The `push_to_hub` function can also be used to add other files to a model repository. For example, add a tokenizer to a model repository:

|

||||

|

||||

```py

|

||||

>>> tokenizer.push_to_hub("my-awesome-model")

|

||||

```

|

||||

|

||||

Or perhaps you'd like to add the TensorFlow version of your fine-tuned PyTorch model:

|

||||

|

||||

```py

|

||||

>>> tf_model.push_to_hub("my-awesome-model")

|

||||

```

|

||||

|

||||

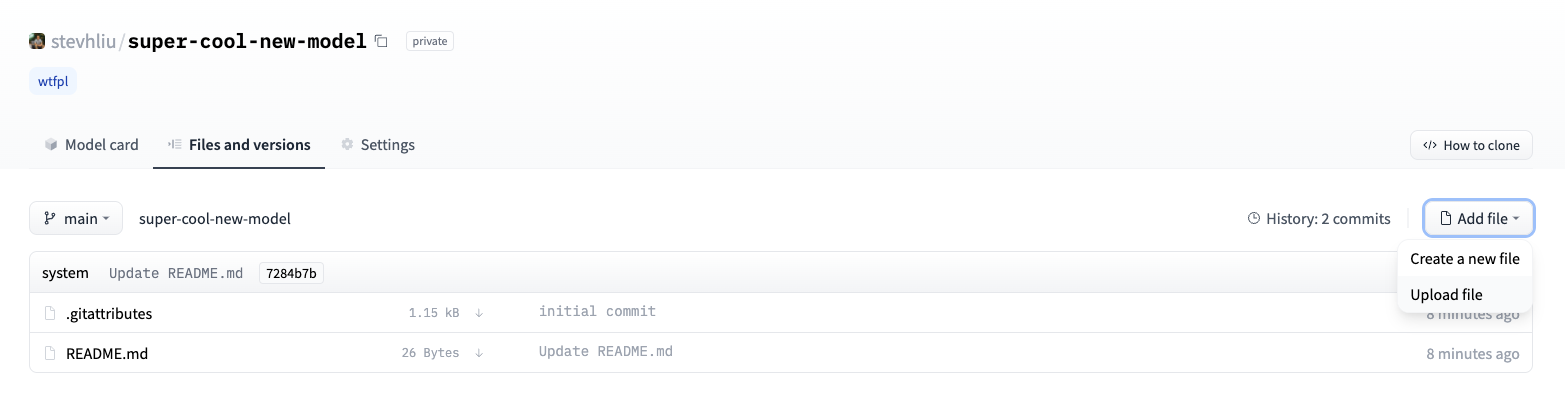

Now when you navigate to your Hugging Face profile, you should see your newly created model repository. Clicking on the **Files** tab will display all the files you've uploaded to the repository.

|

||||

|

||||

For more details on how to create and upload files to a repository, refer to the Hub documentation [here](https://huggingface.co/docs/hub/how-to-upstream).

|

||||

|

||||

## Upload with the web interface

|

||||

|

||||

Users who prefer a no-code approach are able to upload a model through the Hub's web interface. Visit [huggingface.co/new](https://huggingface.co/new) to create a new repository:

|

||||

|

||||

|

||||

|

||||

From here, add some information about your model:

|

||||

|

||||

- Select the **owner** of the repository. This can be yourself or any of the organizations you belong to.

|

||||

- Pick a name for your model, which will also be the repository name.

|

||||

- Choose whether your model is public or private.

|

||||

- Specify the license usage for your model.

|

||||

|

||||

Now click on the **Files** tab and click on the **Add file** button to upload a new file to your repository. Then drag-and-drop a file to upload and add a commit message.

|

||||

|

||||

|

||||

|

||||

## Add a model card

|

||||

|

||||

To make sure users understand your model's capabilities, limitations, potential biases and ethical considerations, please add a model card to your repository. The model card is defined in the `README.md` file. You can add a model card by:

|

||||

|

||||

* Manually creating and uploading a `README.md` file.

|

||||

* Clicking on the **Edit model card** button in your model repository.

|

||||

|

||||

Take a look at the DistilBert [model card](https://huggingface.co/distilbert-base-uncased) for a good example of the type of information a model card should include. For more details about other options you can control in the `README.md` file such as a model's carbon footprint or widget examples, refer to the documentation [here](https://huggingface.co/docs/hub/model-repos).

|

||||

|

||||
