diff --git a/README.md b/README.md

index 5e17e33b204..ea026159803 100644

--- a/README.md

+++ b/README.md

@@ -328,6 +328,7 @@ Current number of checkpoints: ** (from Facebook) released with the paper [Pseudo-Labeling For Massively Multilingual Speech Recognition](https://arxiv.org/abs/2111.00161) by Loren Lugosch, Tatiana Likhomanenko, Gabriel Synnaeve, and Ronan Collobert.

1. **[M2M100](https://huggingface.co/docs/transformers/model_doc/m2m_100)** (from Facebook) released with the paper [Beyond English-Centric Multilingual Machine Translation](https://arxiv.org/abs/2010.11125) by Angela Fan, Shruti Bhosale, Holger Schwenk, Zhiyi Ma, Ahmed El-Kishky, Siddharth Goyal, Mandeep Baines, Onur Celebi, Guillaume Wenzek, Vishrav Chaudhary, Naman Goyal, Tom Birch, Vitaliy Liptchinsky, Sergey Edunov, Edouard Grave, Michael Auli, Armand Joulin.

1. **[MarianMT](https://huggingface.co/docs/transformers/model_doc/marian)** Machine translation models trained using [OPUS](http://opus.nlpl.eu/) data by Jörg Tiedemann. The [Marian Framework](https://marian-nmt.github.io/) is being developed by the Microsoft Translator Team.

+1. **[MarkupLM](https://huggingface.co/docs/transformers/main/model_doc/markuplm)** (from Microsoft Research Asia) released with the paper [MarkupLM: Pre-training of Text and Markup Language for Visually-rich Document Understanding](https://arxiv.org/abs/2110.08518) by Junlong Li, Yiheng Xu, Lei Cui, Furu Wei.

1. **[MaskFormer](https://huggingface.co/docs/transformers/model_doc/maskformer)** (from Meta and UIUC) released with the paper [Per-Pixel Classification is Not All You Need for Semantic Segmentation](https://arxiv.org/abs/2107.06278) by Bowen Cheng, Alexander G. Schwing, Alexander Kirillov.

1. **[mBART](https://huggingface.co/docs/transformers/model_doc/mbart)** (from Facebook) released with the paper [Multilingual Denoising Pre-training for Neural Machine Translation](https://arxiv.org/abs/2001.08210) by Yinhan Liu, Jiatao Gu, Naman Goyal, Xian Li, Sergey Edunov, Marjan Ghazvininejad, Mike Lewis, Luke Zettlemoyer.

1. **[mBART-50](https://huggingface.co/docs/transformers/model_doc/mbart)** (from Facebook) released with the paper [Multilingual Translation with Extensible Multilingual Pretraining and Finetuning](https://arxiv.org/abs/2008.00401) by Yuqing Tang, Chau Tran, Xian Li, Peng-Jen Chen, Naman Goyal, Vishrav Chaudhary, Jiatao Gu, Angela Fan.

diff --git a/README_ko.md b/README_ko.md

index f53075ff5fe..e7a0d9d2960 100644

--- a/README_ko.md

+++ b/README_ko.md

@@ -278,6 +278,7 @@ Flax, PyTorch, TensorFlow 설치 페이지에서 이들을 conda로 설치하는

1. **[M-CTC-T](https://huggingface.co/docs/transformers/model_doc/mctct)** (from Facebook) released with the paper [Pseudo-Labeling For Massively Multilingual Speech Recognition](https://arxiv.org/abs/2111.00161) by Loren Lugosch, Tatiana Likhomanenko, Gabriel Synnaeve, and Ronan Collobert.

1. **[M2M100](https://huggingface.co/docs/transformers/model_doc/m2m_100)** (from Facebook) released with the paper [Beyond English-Centric Multilingual Machine Translation](https://arxiv.org/abs/2010.11125) by Angela Fan, Shruti Bhosale, Holger Schwenk, Zhiyi Ma, Ahmed El-Kishky, Siddharth Goyal, Mandeep Baines, Onur Celebi, Guillaume Wenzek, Vishrav Chaudhary, Naman Goyal, Tom Birch, Vitaliy Liptchinsky, Sergey Edunov, Edouard Grave, Michael Auli, Armand Joulin.

1. **[MarianMT](https://huggingface.co/docs/transformers/model_doc/marian)** Machine translation models trained using [OPUS](http://opus.nlpl.eu/) data by Jörg Tiedemann. The [Marian Framework](https://marian-nmt.github.io/) is being developed by the Microsoft Translator Team.

+1. **[MarkupLM](https://huggingface.co/docs/transformers/main/model_doc/markuplm)** (from Microsoft Research Asia) released with the paper [MarkupLM: Pre-training of Text and Markup Language for Visually-rich Document Understanding](https://arxiv.org/abs/2110.08518) by Junlong Li, Yiheng Xu, Lei Cui, Furu Wei.

1. **[MaskFormer](https://huggingface.co/docs/transformers/model_doc/maskformer)** (from Meta and UIUC) released with the paper [Per-Pixel Classification is Not All You Need for Semantic Segmentation](https://arxiv.org/abs/2107.06278) by Bowen Cheng, Alexander G. Schwing, Alexander Kirillov.

1. **[mBART](https://huggingface.co/docs/transformers/model_doc/mbart)** (from Facebook) released with the paper [Multilingual Denoising Pre-training for Neural Machine Translation](https://arxiv.org/abs/2001.08210) by Yinhan Liu, Jiatao Gu, Naman Goyal, Xian Li, Sergey Edunov, Marjan Ghazvininejad, Mike Lewis, Luke Zettlemoyer.

1. **[mBART-50](https://huggingface.co/docs/transformers/model_doc/mbart)** (from Facebook) released with the paper [Multilingual Translation with Extensible Multilingual Pretraining and Finetuning](https://arxiv.org/abs/2008.00401) by Yuqing Tang, Chau Tran, Xian Li, Peng-Jen Chen, Naman Goyal, Vishrav Chaudhary, Jiatao Gu, Angela Fan.

diff --git a/README_zh-hans.md b/README_zh-hans.md

index 2843a8eb29a..f3f1a5474c8 100644

--- a/README_zh-hans.md

+++ b/README_zh-hans.md

@@ -302,7 +302,8 @@ conda install -c huggingface transformers

1. **[M-CTC-T](https://huggingface.co/docs/transformers/model_doc/mctct)** (来自 Facebook) 伴随论文 [Pseudo-Labeling For Massively Multilingual Speech Recognition](https://arxiv.org/abs/2111.00161) 由 Loren Lugosch, Tatiana Likhomanenko, Gabriel Synnaeve, and Ronan Collobert 发布。

1. **[M2M100](https://huggingface.co/docs/transformers/model_doc/m2m_100)** (来自 Facebook) 伴随论文 [Beyond English-Centric Multilingual Machine Translation](https://arxiv.org/abs/2010.11125) 由 Angela Fan, Shruti Bhosale, Holger Schwenk, Zhiyi Ma, Ahmed El-Kishky, Siddharth Goyal, Mandeep Baines, Onur Celebi, Guillaume Wenzek, Vishrav Chaudhary, Naman Goyal, Tom Birch, Vitaliy Liptchinsky, Sergey Edunov, Edouard Grave, Michael Auli, Armand Joulin 发布。

1. **[MarianMT](https://huggingface.co/docs/transformers/model_doc/marian)** 用 [OPUS](http://opus.nlpl.eu/) 数据训练的机器翻译模型由 Jörg Tiedemann 发布。[Marian Framework](https://marian-nmt.github.io/) 由微软翻译团队开发。

-1. **[MaskFormer](https://huggingface.co/docs/transformers/model_doc/maskformer)** (from Meta and UIUC) released with the paper [Per-Pixel Classification is Not All You Need for Semantic Segmentation](https://arxiv.org/abs/2107.06278) by Bowen Cheng, Alexander G. Schwing, Alexander Kirillov

+1. **[MarkupLM](https://huggingface.co/docs/transformers/main/model_doc/markuplm)** (来自 Microsoft Research Asia) 伴随论文 [MarkupLM: Pre-training of Text and Markup Language for Visually-rich Document Understanding](https://arxiv.org/abs/2110.08518) 由 Junlong Li, Yiheng Xu, Lei Cui, Furu Wei 发布。

+1. **[MaskFormer](https://huggingface.co/docs/transformers/model_doc/maskformer)** (from Meta and UIUC) released with the paper [Per-Pixel Classification is Not All You Need for Semantic Segmentation](https://arxiv.org/abs/2107.06278) by Bowen Cheng, Alexander G. Schwing, Alexander Kirillov >>>>>>> Fix rebase

1. **[mBART](https://huggingface.co/docs/transformers/model_doc/mbart)** (来自 Facebook) 伴随论文 [Multilingual Denoising Pre-training for Neural Machine Translation](https://arxiv.org/abs/2001.08210) 由 Yinhan Liu, Jiatao Gu, Naman Goyal, Xian Li, Sergey Edunov, Marjan Ghazvininejad, Mike Lewis, Luke Zettlemoyer 发布。

1. **[mBART-50](https://huggingface.co/docs/transformers/model_doc/mbart)** (来自 Facebook) 伴随论文 [Multilingual Translation with Extensible Multilingual Pretraining and Finetuning](https://arxiv.org/abs/2008.00401) 由 Yuqing Tang, Chau Tran, Xian Li, Peng-Jen Chen, Naman Goyal, Vishrav Chaudhary, Jiatao Gu, Angela Fan 发布。

1. **[Megatron-BERT](https://huggingface.co/docs/transformers/model_doc/megatron-bert)** (来自 NVIDIA) 伴随论文 [Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism](https://arxiv.org/abs/1909.08053) 由 Mohammad Shoeybi, Mostofa Patwary, Raul Puri, Patrick LeGresley, Jared Casper and Bryan Catanzaro 发布。

diff --git a/README_zh-hant.md b/README_zh-hant.md

index 8f74b97e985..43e8a05372c 100644

--- a/README_zh-hant.md

+++ b/README_zh-hant.md

@@ -314,6 +314,7 @@ conda install -c huggingface transformers

1. **[M-CTC-T](https://huggingface.co/docs/transformers/model_doc/mctct)** (from Facebook) released with the paper [Pseudo-Labeling For Massively Multilingual Speech Recognition](https://arxiv.org/abs/2111.00161) by Loren Lugosch, Tatiana Likhomanenko, Gabriel Synnaeve, and Ronan Collobert.

1. **[M2M100](https://huggingface.co/docs/transformers/model_doc/m2m_100)** (from Facebook) released with the paper [Beyond English-Centric Multilingual Machine Translation](https://arxiv.org/abs/2010.11125) by Angela Fan, Shruti Bhosale, Holger Schwenk, Zhiyi Ma, Ahmed El-Kishky, Siddharth Goyal, Mandeep Baines, Onur Celebi, Guillaume Wenzek, Vishrav Chaudhary, Naman Goyal, Tom Birch, Vitaliy Liptchinsky, Sergey Edunov, Edouard Grave, Michael Auli, Armand Joulin.

1. **[MarianMT](https://huggingface.co/docs/transformers/model_doc/marian)** Machine translation models trained using [OPUS](http://opus.nlpl.eu/) data by Jörg Tiedemann. The [Marian Framework](https://marian-nmt.github.io/) is being developed by the Microsoft Translator Team.

+1. **[MarkupLM](https://huggingface.co/docs/transformers/main/model_doc/markuplm)** (from Microsoft Research Asia) released with the paper [MarkupLM: Pre-training of Text and Markup Language for Visually-rich Document Understanding](https://arxiv.org/abs/2110.08518) by Junlong Li, Yiheng Xu, Lei Cui, Furu Wei.

1. **[MaskFormer](https://huggingface.co/docs/transformers/model_doc/maskformer)** (from Meta and UIUC) released with the paper [Per-Pixel Classification is Not All You Need for Semantic Segmentation](https://arxiv.org/abs/2107.06278) by Bowen Cheng, Alexander G. Schwing, Alexander Kirillov

1. **[mBART](https://huggingface.co/docs/transformers/model_doc/mbart)** (from Facebook) released with the paper [Multilingual Denoising Pre-training for Neural Machine Translation](https://arxiv.org/abs/2001.08210) by Yinhan Liu, Jiatao Gu, Naman Goyal, Xian Li, Sergey Edunov, Marjan Ghazvininejad, Mike Lewis, Luke Zettlemoyer.

1. **[mBART-50](https://huggingface.co/docs/transformers/model_doc/mbart)** (from Facebook) released with the paper [Multilingual Translation with Extensible Multilingual Pretraining and Finetuning](https://arxiv.org/abs/2008.00401) by Yuqing Tang, Chau Tran, Xian Li, Peng-Jen Chen, Naman Goyal, Vishrav Chaudhary, Jiatao Gu, Angela Fan.

diff --git a/docs/source/en/_toctree.yml b/docs/source/en/_toctree.yml

index 5e2d25ee3c4..644778e155c 100644

--- a/docs/source/en/_toctree.yml

+++ b/docs/source/en/_toctree.yml

@@ -279,6 +279,8 @@

title: M2M100

- local: model_doc/marian

title: MarianMT

+ - local: model_doc/markuplm

+ title: MarkupLM

- local: model_doc/mbart

title: MBart and MBart-50

- local: model_doc/megatron-bert

diff --git a/docs/source/en/index.mdx b/docs/source/en/index.mdx

index 98a458e11ff..652c5bc77b8 100644

--- a/docs/source/en/index.mdx

+++ b/docs/source/en/index.mdx

@@ -118,6 +118,7 @@ The documentation is organized into five sections:

1. **[M-CTC-T](model_doc/mctct)** (from Facebook) released with the paper [Pseudo-Labeling For Massively Multilingual Speech Recognition](https://arxiv.org/abs/2111.00161) by Loren Lugosch, Tatiana Likhomanenko, Gabriel Synnaeve, and Ronan Collobert.

1. **[M2M100](model_doc/m2m_100)** (from Facebook) released with the paper [Beyond English-Centric Multilingual Machine Translation](https://arxiv.org/abs/2010.11125) by Angela Fan, Shruti Bhosale, Holger Schwenk, Zhiyi Ma, Ahmed El-Kishky, Siddharth Goyal, Mandeep Baines, Onur Celebi, Guillaume Wenzek, Vishrav Chaudhary, Naman Goyal, Tom Birch, Vitaliy Liptchinsky, Sergey Edunov, Edouard Grave, Michael Auli, Armand Joulin.

1. **[MarianMT](model_doc/marian)** Machine translation models trained using [OPUS](http://opus.nlpl.eu/) data by Jörg Tiedemann. The [Marian Framework](https://marian-nmt.github.io/) is being developed by the Microsoft Translator Team.

+1. **[MarkupLM](model_doc/markuplm)** (from Microsoft Research Asia) released with the paper [MarkupLM: Pre-training of Text and Markup Language for Visually-rich Document Understanding](https://arxiv.org/abs/2110.08518) by Junlong Li, Yiheng Xu, Lei Cui, Furu Wei.

1. **[MaskFormer](model_doc/maskformer)** (from Meta and UIUC) released with the paper [Per-Pixel Classification is Not All You Need for Semantic Segmentation](https://arxiv.org/abs/2107.06278) by Bowen Cheng, Alexander G. Schwing, Alexander Kirillov.

1. **[mBART](model_doc/mbart)** (from Facebook) released with the paper [Multilingual Denoising Pre-training for Neural Machine Translation](https://arxiv.org/abs/2001.08210) by Yinhan Liu, Jiatao Gu, Naman Goyal, Xian Li, Sergey Edunov, Marjan Ghazvininejad, Mike Lewis, Luke Zettlemoyer.

1. **[mBART-50](model_doc/mbart)** (from Facebook) released with the paper [Multilingual Translation with Extensible Multilingual Pretraining and Finetuning](https://arxiv.org/abs/2008.00401) by Yuqing Tang, Chau Tran, Xian Li, Peng-Jen Chen, Naman Goyal, Vishrav Chaudhary, Jiatao Gu, Angela Fan.

@@ -264,6 +265,7 @@ Flax), PyTorch, and/or TensorFlow.

| M-CTC-T | ❌ | ❌ | ✅ | ❌ | ❌ |

| M2M100 | ✅ | ❌ | ✅ | ❌ | ❌ |

| Marian | ✅ | ❌ | ✅ | ✅ | ✅ |

+| MarkupLM | ✅ | ✅ | ✅ | ❌ | ❌ |

| MaskFormer | ❌ | ❌ | ✅ | ❌ | ❌ |

| mBART | ✅ | ✅ | ✅ | ✅ | ✅ |

| Megatron-BERT | ❌ | ❌ | ✅ | ❌ | ❌ |

diff --git a/docs/source/en/model_doc/markuplm.mdx b/docs/source/en/model_doc/markuplm.mdx

new file mode 100644

index 00000000000..66ba7a8180d

--- /dev/null

+++ b/docs/source/en/model_doc/markuplm.mdx

@@ -0,0 +1,246 @@

+

+

+# MarkupLM

+

+## Overview

+

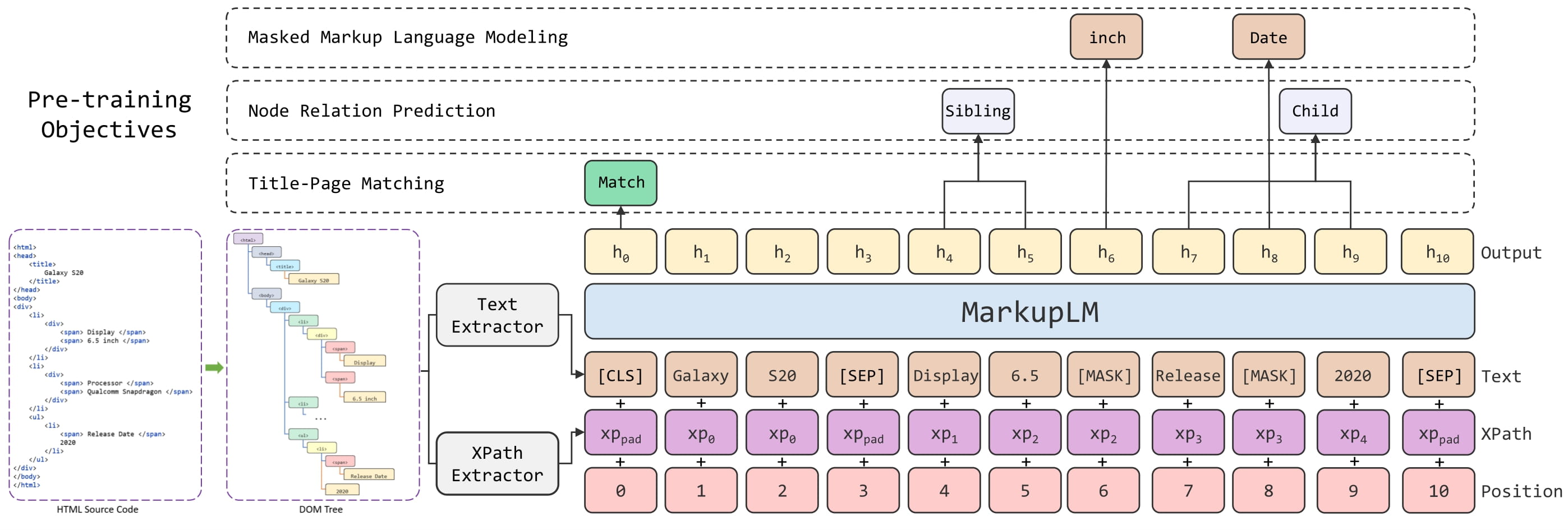

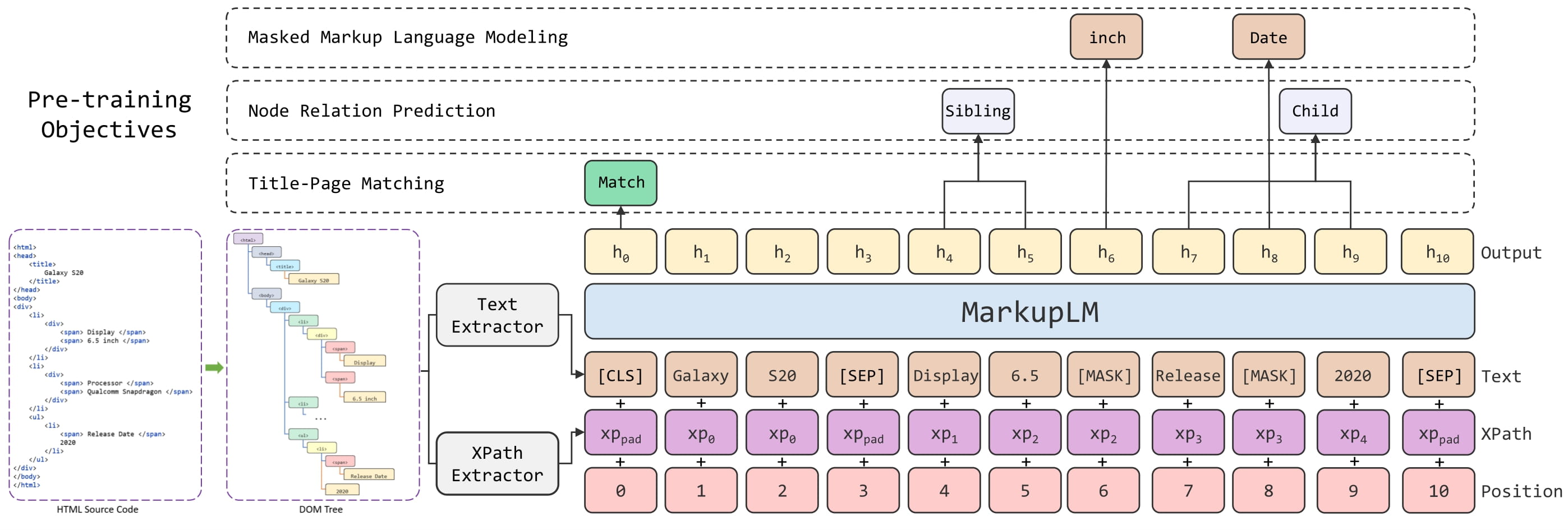

+The MarkupLM model was proposed in [MarkupLM: Pre-training of Text and Markup Language for Visually-rich Document

+Understanding](https://arxiv.org/abs/2110.08518) by Junlong Li, Yiheng Xu, Lei Cui, Furu Wei. MarkupLM is BERT, but

+applied to HTML pages instead of raw text documents. The model incorporates additional embedding layers to improve

+performance, similar to [LayoutLM](layoutlm).

+

+The model can be used for tasks like question answering on web pages or information extraction from web pages. It obtains

+state-of-the-art results on 2 important benchmarks:

+- [WebSRC](https://x-lance.github.io/WebSRC/), a dataset for Web-Based Structual Reading Comprehension (a bit like SQuAD but for web pages)

+- [SWDE](https://www.researchgate.net/publication/221299838_From_one_tree_to_a_forest_a_unified_solution_for_structured_web_data_extraction), a dataset

+for information extraction from web pages (basically named-entity recogntion on web pages)

+

+The abstract from the paper is the following:

+

+*Multimodal pre-training with text, layout, and image has made significant progress for Visually-rich Document

+Understanding (VrDU), especially the fixed-layout documents such as scanned document images. While, there are still a

+large number of digital documents where the layout information is not fixed and needs to be interactively and

+dynamically rendered for visualization, making existing layout-based pre-training approaches not easy to apply. In this

+paper, we propose MarkupLM for document understanding tasks with markup languages as the backbone such as

+HTML/XML-based documents, where text and markup information is jointly pre-trained. Experiment results show that the

+pre-trained MarkupLM significantly outperforms the existing strong baseline models on several document understanding

+tasks. The pre-trained model and code will be publicly available.*

+

+Tips:

+- In addition to `input_ids`, [`~MarkupLMModel.forward`] expects 2 additional inputs, namely `xpath_tags_seq` and `xpath_subs_seq`.

+These are the XPATH tags and subscripts respectively for each token in the input sequence.

+- One can use [`MarkupLMProcessor`] to prepare all data for the model. Refer to the [usage guide](#usage-markuplmprocessor) for more info.

+- Demo notebooks can be found [here](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/MarkupLM).

+

+ +

+ MarkupLM architecture. Taken from the original paper.

+

+This model was contributed by [nielsr](https://huggingface.co/nielsr). The original code can be found [here](https://github.com/microsoft/unilm/tree/master/markuplm).

+

+## Usage: MarkupLMProcessor

+

+The easiest way to prepare data for the model is to use [`MarkupLMProcessor`], which internally combines a feature extractor

+([`MarkupLMFeatureExtractor`]) and a tokenizer ([`MarkupLMTokenizer`] or [`MarkupLMTokenizerFast`]). The feature extractor is

+used to extract all nodes and xpaths from the HTML strings, which are then provided to the tokenizer, which turns them into the

+token-level inputs of the model (`input_ids` etc.). Note that you can still use the feature extractor and tokenizer separately,

+if you only want to handle one of the two tasks.

+

+```python

+from transformers import MarkupLMFeatureExtractor, MarkupLMTokenizerFast, MarkupLMProcessor

+

+feature_extractor = MarkupLMFeatureExtractor()

+tokenizer = MarkupLMTokenizerFast.from_pretrained("microsoft/markuplm-base")

+processor = MarkupLMProcessor(feature_extractor, tokenizer)

+```

+

+In short, one can provide HTML strings (and possibly additional data) to [`MarkupLMProcessor`],

+and it will create the inputs expected by the model. Internally, the processor first uses

+[`MarkupLMFeatureExtractor`] to get a list of nodes and corresponding xpaths. The nodes and

+xpaths are then provided to [`MarkupLMTokenizer`] or [`MarkupLMTokenizerFast`], which converts them

+to token-level `input_ids`, `attention_mask`, `token_type_ids`, `xpath_subs_seq`, `xpath_tags_seq`.

+Optionally, one can provide node labels to the processor, which are turned into token-level `labels`.

+

+[`MarkupLMFeatureExtractor`] uses [Beautiful Soup](https://www.crummy.com/software/BeautifulSoup/bs4/doc/), a Python library for

+pulling data out of HTML and XML files, under the hood. Note that you can still use your own parsing solution of

+choice, and provide the nodes and xpaths yourself to [`MarkupLMTokenizer`] or [`MarkupLMTokenizerFast`].

+

+In total, there are 5 use cases that are supported by the processor. Below, we list them all. Note that each of these

+use cases work for both batched and non-batched inputs (we illustrate them for non-batched inputs).

+

+**Use case 1: web page classification (training, inference) + token classification (inference), parse_html = True**

+

+This is the simplest case, in which the processor will use the feature extractor to get all nodes and xpaths from the HTML.

+

+```python

+>>> from transformers import MarkupLMProcessor

+

+>>> processor = MarkupLMProcessor.from_pretrained("microsoft/markuplm-base")

+

+>>> html_string = """

+...

+...

+...

+... Hello world

+...

+...

+

+...

+

+ MarkupLM architecture. Taken from the original paper.

+

+This model was contributed by [nielsr](https://huggingface.co/nielsr). The original code can be found [here](https://github.com/microsoft/unilm/tree/master/markuplm).

+

+## Usage: MarkupLMProcessor

+

+The easiest way to prepare data for the model is to use [`MarkupLMProcessor`], which internally combines a feature extractor

+([`MarkupLMFeatureExtractor`]) and a tokenizer ([`MarkupLMTokenizer`] or [`MarkupLMTokenizerFast`]). The feature extractor is

+used to extract all nodes and xpaths from the HTML strings, which are then provided to the tokenizer, which turns them into the

+token-level inputs of the model (`input_ids` etc.). Note that you can still use the feature extractor and tokenizer separately,

+if you only want to handle one of the two tasks.

+

+```python

+from transformers import MarkupLMFeatureExtractor, MarkupLMTokenizerFast, MarkupLMProcessor

+

+feature_extractor = MarkupLMFeatureExtractor()

+tokenizer = MarkupLMTokenizerFast.from_pretrained("microsoft/markuplm-base")

+processor = MarkupLMProcessor(feature_extractor, tokenizer)

+```

+

+In short, one can provide HTML strings (and possibly additional data) to [`MarkupLMProcessor`],

+and it will create the inputs expected by the model. Internally, the processor first uses

+[`MarkupLMFeatureExtractor`] to get a list of nodes and corresponding xpaths. The nodes and

+xpaths are then provided to [`MarkupLMTokenizer`] or [`MarkupLMTokenizerFast`], which converts them

+to token-level `input_ids`, `attention_mask`, `token_type_ids`, `xpath_subs_seq`, `xpath_tags_seq`.

+Optionally, one can provide node labels to the processor, which are turned into token-level `labels`.

+

+[`MarkupLMFeatureExtractor`] uses [Beautiful Soup](https://www.crummy.com/software/BeautifulSoup/bs4/doc/), a Python library for

+pulling data out of HTML and XML files, under the hood. Note that you can still use your own parsing solution of

+choice, and provide the nodes and xpaths yourself to [`MarkupLMTokenizer`] or [`MarkupLMTokenizerFast`].

+

+In total, there are 5 use cases that are supported by the processor. Below, we list them all. Note that each of these

+use cases work for both batched and non-batched inputs (we illustrate them for non-batched inputs).

+

+**Use case 1: web page classification (training, inference) + token classification (inference), parse_html = True**

+

+This is the simplest case, in which the processor will use the feature extractor to get all nodes and xpaths from the HTML.

+

+```python

+>>> from transformers import MarkupLMProcessor

+

+>>> processor = MarkupLMProcessor.from_pretrained("microsoft/markuplm-base")

+

+>>> html_string = """

+...

+...

+...

+... Hello world

+...

+...

+

+... Welcome

+... Here is my website.

+

+...

+... """

+

+>>> # note that you can also add provide all tokenizer parameters here such as padding, truncation

+>>> encoding = processor(html_string, return_tensors="pt")

+>>> print(encoding.keys())

+dict_keys(['input_ids', 'token_type_ids', 'attention_mask', 'xpath_tags_seq', 'xpath_subs_seq'])

+```

+

+**Use case 2: web page classification (training, inference) + token classification (inference), parse_html=False**

+

+In case one already has obtained all nodes and xpaths, one doesn't need the feature extractor. In that case, one should

+provide the nodes and corresponding xpaths themselves to the processor, and make sure to set `parse_html` to `False`.

+

+```python

+>>> from transformers import MarkupLMProcessor

+

+>>> processor = MarkupLMProcessor.from_pretrained("microsoft/markuplm-base")

+>>> processor.parse_html = False

+

+>>> nodes = ["hello", "world", "how", "are"]

+>>> xpaths = ["/html/body/div/li[1]/div/span", "/html/body/div/li[1]/div/span", "html/body", "html/body/div"]

+>>> encoding = processor(nodes=nodes, xpaths=xpaths, return_tensors="pt")

+>>> print(encoding.keys())

+dict_keys(['input_ids', 'token_type_ids', 'attention_mask', 'xpath_tags_seq', 'xpath_subs_seq'])

+```

+

+**Use case 3: token classification (training), parse_html=False**

+

+For token classification tasks (such as [SWDE](https://paperswithcode.com/dataset/swde)), one can also provide the

+corresponding node labels in order to train a model. The processor will then convert these into token-level `labels`.

+By default, it will only label the first wordpiece of a word, and label the remaining wordpieces with -100, which is the

+`ignore_index` of PyTorch's CrossEntropyLoss. In case you want all wordpieces of a word to be labeled, you can

+initialize the tokenizer with `only_label_first_subword` set to `False`.

+

+```python

+>>> from transformers import MarkupLMProcessor

+

+>>> processor = MarkupLMProcessor.from_pretrained("microsoft/markuplm-base")

+>>> processor.parse_html = False

+

+>>> nodes = ["hello", "world", "how", "are"]

+>>> xpaths = ["/html/body/div/li[1]/div/span", "/html/body/div/li[1]/div/span", "html/body", "html/body/div"]

+>>> node_labels = [1, 2, 2, 1]

+>>> encoding = processor(nodes=nodes, xpaths=xpaths, node_labels=node_labels, return_tensors="pt")

+>>> print(encoding.keys())

+dict_keys(['input_ids', 'token_type_ids', 'attention_mask', 'xpath_tags_seq', 'xpath_subs_seq', 'labels'])

+```

+

+**Use case 4: web page question answering (inference), parse_html=True**

+

+For question answering tasks on web pages, you can provide a question to the processor. By default, the

+processor will use the feature extractor to get all nodes and xpaths, and create [CLS] question tokens [SEP] word tokens [SEP].

+

+```python

+>>> from transformers import MarkupLMProcessor

+

+>>> processor = MarkupLMProcessor.from_pretrained("microsoft/markuplm-base")

+

+>>> html_string = """

+...

+...

+...

+... Hello world

+...

+...

+

+... Welcome

+... My name is Niels.

+

+...

+... """

+

+>>> question = "What's his name?"

+>>> encoding = processor(html_string, questions=question, return_tensors="pt")

+>>> print(encoding.keys())

+dict_keys(['input_ids', 'token_type_ids', 'attention_mask', 'xpath_tags_seq', 'xpath_subs_seq'])

+```

+

+**Use case 5: web page question answering (inference), apply_ocr=False**

+

+For question answering tasks (such as WebSRC), you can provide a question to the processor. If you have extracted

+all nodes and xpaths yourself, you can provide them directly to the processor. Make sure to set `parse_html` to `False`.

+

+```python

+>>> from transformers import MarkupLMProcessor

+

+>>> processor = MarkupLMProcessor.from_pretrained("microsoft/markuplm-base")

+>>> processor.parse_html = False

+

+>>> nodes = ["hello", "world", "how", "are"]

+>>> xpaths = ["/html/body/div/li[1]/div/span", "/html/body/div/li[1]/div/span", "html/body", "html/body/div"]

+>>> question = "What's his name?"

+>>> encoding = processor(nodes=nodes, xpaths=xpaths, questions=question, return_tensors="pt")

+>>> print(encoding.keys())

+dict_keys(['input_ids', 'token_type_ids', 'attention_mask', 'xpath_tags_seq', 'xpath_subs_seq'])

+```

+

+## MarkupLMConfig

+

+[[autodoc]] MarkupLMConfig

+ - all

+

+## MarkupLMFeatureExtractor

+

+[[autodoc]] MarkupLMFeatureExtractor

+ - __call__

+

+## MarkupLMTokenizer

+

+[[autodoc]] MarkupLMTokenizer

+ - build_inputs_with_special_tokens

+ - get_special_tokens_mask

+ - create_token_type_ids_from_sequences

+ - save_vocabulary

+

+## MarkupLMTokenizerFast

+

+[[autodoc]] MarkupLMTokenizerFast

+ - all

+

+## MarkupLMProcessor

+

+[[autodoc]] MarkupLMProcessor

+ - __call__

+

+## MarkupLMModel

+

+[[autodoc]] MarkupLMModel

+ - forward

+

+## MarkupLMForSequenceClassification

+

+[[autodoc]] MarkupLMForSequenceClassification

+ - forward

+

+## MarkupLMForTokenClassification

+

+[[autodoc]] MarkupLMForTokenClassification

+ - forward

+

+## MarkupLMForQuestionAnswering

+

+[[autodoc]] MarkupLMForQuestionAnswering

+ - forward

\ No newline at end of file

diff --git a/src/transformers/__init__.py b/src/transformers/__init__.py

index fb09d0af9f2..6478bcd7e5b 100755

--- a/src/transformers/__init__.py

+++ b/src/transformers/__init__.py

@@ -262,6 +262,13 @@ _import_structure = {

"models.lxmert": ["LXMERT_PRETRAINED_CONFIG_ARCHIVE_MAP", "LxmertConfig", "LxmertTokenizer"],

"models.m2m_100": ["M2M_100_PRETRAINED_CONFIG_ARCHIVE_MAP", "M2M100Config"],

"models.marian": ["MarianConfig"],

+ "models.markuplm": [

+ "MARKUPLM_PRETRAINED_CONFIG_ARCHIVE_MAP",

+ "MarkupLMConfig",

+ "MarkupLMFeatureExtractor",

+ "MarkupLMProcessor",

+ "MarkupLMTokenizer",

+ ],

"models.maskformer": ["MASKFORMER_PRETRAINED_CONFIG_ARCHIVE_MAP", "MaskFormerConfig"],

"models.mbart": ["MBartConfig"],

"models.mbart50": [],

@@ -570,6 +577,7 @@ else:

_import_structure["models.led"].append("LEDTokenizerFast")

_import_structure["models.longformer"].append("LongformerTokenizerFast")

_import_structure["models.lxmert"].append("LxmertTokenizerFast")

+ _import_structure["models.markuplm"].append("MarkupLMTokenizerFast")

_import_structure["models.mbart"].append("MBartTokenizerFast")

_import_structure["models.mbart50"].append("MBart50TokenizerFast")

_import_structure["models.mobilebert"].append("MobileBertTokenizerFast")

@@ -1488,6 +1496,16 @@ else:

"MaskFormerPreTrainedModel",

]

)

+ _import_structure["models.markuplm"].extend(

+ [

+ "MARKUPLM_PRETRAINED_MODEL_ARCHIVE_LIST",

+ "MarkupLMForQuestionAnswering",

+ "MarkupLMForSequenceClassification",

+ "MarkupLMForTokenClassification",

+ "MarkupLMModel",

+ "MarkupLMPreTrainedModel",

+ ]

+ )

_import_structure["models.mbart"].extend(

[

"MBartForCausalLM",

@@ -3192,6 +3210,13 @@ if TYPE_CHECKING:

from .models.lxmert import LXMERT_PRETRAINED_CONFIG_ARCHIVE_MAP, LxmertConfig, LxmertTokenizer

from .models.m2m_100 import M2M_100_PRETRAINED_CONFIG_ARCHIVE_MAP, M2M100Config

from .models.marian import MarianConfig

+ from .models.markuplm import (

+ MARKUPLM_PRETRAINED_CONFIG_ARCHIVE_MAP,

+ MarkupLMConfig,

+ MarkupLMFeatureExtractor,

+ MarkupLMProcessor,

+ MarkupLMTokenizer,

+ )

from .models.maskformer import MASKFORMER_PRETRAINED_CONFIG_ARCHIVE_MAP, MaskFormerConfig

from .models.mbart import MBartConfig

from .models.mctct import MCTCT_PRETRAINED_CONFIG_ARCHIVE_MAP, MCTCTConfig, MCTCTProcessor

@@ -3465,6 +3490,7 @@ if TYPE_CHECKING:

from .models.led import LEDTokenizerFast

from .models.longformer import LongformerTokenizerFast

from .models.lxmert import LxmertTokenizerFast

+ from .models.markuplm import MarkupLMTokenizerFast

from .models.mbart import MBartTokenizerFast

from .models.mbart50 import MBart50TokenizerFast

from .models.mobilebert import MobileBertTokenizerFast

@@ -4196,6 +4222,14 @@ if TYPE_CHECKING:

M2M100PreTrainedModel,

)

from .models.marian import MarianForCausalLM, MarianModel, MarianMTModel

+ from .models.markuplm import (

+ MARKUPLM_PRETRAINED_MODEL_ARCHIVE_LIST,

+ MarkupLMForQuestionAnswering,

+ MarkupLMForSequenceClassification,

+ MarkupLMForTokenClassification,

+ MarkupLMModel,

+ MarkupLMPreTrainedModel,

+ )

from .models.maskformer import (

MASKFORMER_PRETRAINED_MODEL_ARCHIVE_LIST,

MaskFormerForInstanceSegmentation,

diff --git a/src/transformers/convert_slow_tokenizer.py b/src/transformers/convert_slow_tokenizer.py

index 6fbd7b49b06..ce52ba3b3be 100644

--- a/src/transformers/convert_slow_tokenizer.py

+++ b/src/transformers/convert_slow_tokenizer.py

@@ -1043,6 +1043,44 @@ class XGLMConverter(SpmConverter):

)

+class MarkupLMConverter(Converter):

+ def converted(self) -> Tokenizer:

+ ot = self.original_tokenizer

+ vocab = ot.encoder

+ merges = list(ot.bpe_ranks.keys())

+

+ tokenizer = Tokenizer(

+ BPE(

+ vocab=vocab,

+ merges=merges,

+ dropout=None,

+ continuing_subword_prefix="",

+ end_of_word_suffix="",

+ fuse_unk=False,

+ unk_token=self.original_tokenizer.unk_token,

+ )

+ )

+

+ tokenizer.pre_tokenizer = pre_tokenizers.ByteLevel(add_prefix_space=ot.add_prefix_space)

+ tokenizer.decoder = decoders.ByteLevel()

+

+ cls = str(self.original_tokenizer.cls_token)

+ sep = str(self.original_tokenizer.sep_token)

+ cls_token_id = self.original_tokenizer.cls_token_id

+ sep_token_id = self.original_tokenizer.sep_token_id

+

+ tokenizer.post_processor = processors.TemplateProcessing(

+ single=f"{cls} $A {sep}",

+ pair=f"{cls} $A {sep} $B {sep}",

+ special_tokens=[

+ (cls, cls_token_id),

+ (sep, sep_token_id),

+ ],

+ )

+

+ return tokenizer

+

+

SLOW_TO_FAST_CONVERTERS = {

"AlbertTokenizer": AlbertConverter,

"BartTokenizer": RobertaConverter,

@@ -1072,6 +1110,7 @@ SLOW_TO_FAST_CONVERTERS = {

"LongformerTokenizer": RobertaConverter,

"LEDTokenizer": RobertaConverter,

"LxmertTokenizer": BertConverter,

+ "MarkupLMTokenizer": MarkupLMConverter,

"MBartTokenizer": MBartConverter,

"MBart50Tokenizer": MBart50Converter,

"MPNetTokenizer": MPNetConverter,

diff --git a/src/transformers/file_utils.py b/src/transformers/file_utils.py

index aa3681e057b..87cd9a46918 100644

--- a/src/transformers/file_utils.py

+++ b/src/transformers/file_utils.py

@@ -79,6 +79,7 @@ from .utils import (

has_file,

http_user_agent,

is_apex_available,

+ is_bs4_available,

is_coloredlogs_available,

is_datasets_available,

is_detectron2_available,

diff --git a/src/transformers/models/__init__.py b/src/transformers/models/__init__.py

index 18c21cdf186..261d4c03e23 100644

--- a/src/transformers/models/__init__.py

+++ b/src/transformers/models/__init__.py

@@ -88,6 +88,7 @@ from . import (

lxmert,

m2m_100,

marian,

+ markuplm,

maskformer,

mbart,

mbart50,

diff --git a/src/transformers/models/auto/configuration_auto.py b/src/transformers/models/auto/configuration_auto.py

index 39c48b217ff..781641b74ed 100644

--- a/src/transformers/models/auto/configuration_auto.py

+++ b/src/transformers/models/auto/configuration_auto.py

@@ -90,6 +90,7 @@ CONFIG_MAPPING_NAMES = OrderedDict(

("lxmert", "LxmertConfig"),

("m2m_100", "M2M100Config"),

("marian", "MarianConfig"),

+ ("markuplm", "MarkupLMConfig"),

("maskformer", "MaskFormerConfig"),

("mbart", "MBartConfig"),

("mctct", "MCTCTConfig"),

@@ -221,6 +222,7 @@ CONFIG_ARCHIVE_MAP_MAPPING_NAMES = OrderedDict(

("luke", "LUKE_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("lxmert", "LXMERT_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("m2m_100", "M2M_100_PRETRAINED_CONFIG_ARCHIVE_MAP"),

+ ("markuplm", "MARKUPLM_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("maskformer", "MASKFORMER_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("mbart", "MBART_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("mctct", "MCTCT_PRETRAINED_CONFIG_ARCHIVE_MAP"),

@@ -357,6 +359,7 @@ MODEL_NAMES_MAPPING = OrderedDict(

("lxmert", "LXMERT"),

("m2m_100", "M2M100"),

("marian", "Marian"),

+ ("markuplm", "MarkupLM"),

("maskformer", "MaskFormer"),

("mbart", "mBART"),

("mbart50", "mBART-50"),

diff --git a/src/transformers/models/auto/modeling_auto.py b/src/transformers/models/auto/modeling_auto.py

index 936e9c8bdc4..d703c5b22a6 100644

--- a/src/transformers/models/auto/modeling_auto.py

+++ b/src/transformers/models/auto/modeling_auto.py

@@ -89,6 +89,7 @@ MODEL_MAPPING_NAMES = OrderedDict(

("lxmert", "LxmertModel"),

("m2m_100", "M2M100Model"),

("marian", "MarianModel"),

+ ("markuplm", "MarkupLMModel"),

("maskformer", "MaskFormerModel"),

("mbart", "MBartModel"),

("mctct", "MCTCTModel"),

@@ -247,6 +248,7 @@ MODEL_WITH_LM_HEAD_MAPPING_NAMES = OrderedDict(

("luke", "LukeForMaskedLM"),

("m2m_100", "M2M100ForConditionalGeneration"),

("marian", "MarianMTModel"),

+ ("markuplm", "MarkupLMForMaskedLM"),

("megatron-bert", "MegatronBertForCausalLM"),

("mobilebert", "MobileBertForMaskedLM"),

("mpnet", "MPNetForMaskedLM"),

@@ -530,6 +532,7 @@ MODEL_FOR_SEQUENCE_CLASSIFICATION_MAPPING_NAMES = OrderedDict(

("led", "LEDForSequenceClassification"),

("longformer", "LongformerForSequenceClassification"),

("luke", "LukeForSequenceClassification"),

+ ("markuplm", "MarkupLMForSequenceClassification"),

("mbart", "MBartForSequenceClassification"),

("megatron-bert", "MegatronBertForSequenceClassification"),

("mobilebert", "MobileBertForSequenceClassification"),

@@ -585,6 +588,7 @@ MODEL_FOR_QUESTION_ANSWERING_MAPPING_NAMES = OrderedDict(

("longformer", "LongformerForQuestionAnswering"),

("luke", "LukeForQuestionAnswering"),

("lxmert", "LxmertForQuestionAnswering"),

+ ("markuplm", "MarkupLMForQuestionAnswering"),

("mbart", "MBartForQuestionAnswering"),

("megatron-bert", "MegatronBertForQuestionAnswering"),

("mobilebert", "MobileBertForQuestionAnswering"),

@@ -654,6 +658,7 @@ MODEL_FOR_TOKEN_CLASSIFICATION_MAPPING_NAMES = OrderedDict(

("layoutlmv3", "LayoutLMv3ForTokenClassification"),

("longformer", "LongformerForTokenClassification"),

("luke", "LukeForTokenClassification"),

+ ("markuplm", "MarkupLMForTokenClassification"),

("megatron-bert", "MegatronBertForTokenClassification"),

("mobilebert", "MobileBertForTokenClassification"),

("mpnet", "MPNetForTokenClassification"),

diff --git a/src/transformers/models/auto/processing_auto.py b/src/transformers/models/auto/processing_auto.py

index 07b2811a164..9885cae95e8 100644

--- a/src/transformers/models/auto/processing_auto.py

+++ b/src/transformers/models/auto/processing_auto.py

@@ -46,6 +46,7 @@ PROCESSOR_MAPPING_NAMES = OrderedDict(

("layoutlmv2", "LayoutLMv2Processor"),

("layoutlmv3", "LayoutLMv3Processor"),

("layoutxlm", "LayoutXLMProcessor"),

+ ("markuplm", "MarkupLMProcessor"),

("owlvit", "OwlViTProcessor"),

("sew", "Wav2Vec2Processor"),

("sew-d", "Wav2Vec2Processor"),

diff --git a/src/transformers/models/markuplm/__init__.py b/src/transformers/models/markuplm/__init__.py

new file mode 100644

index 00000000000..9d81b9ad369

--- /dev/null

+++ b/src/transformers/models/markuplm/__init__.py

@@ -0,0 +1,88 @@

+# flake8: noqa

+# There's no way to ignore "F401 '...' imported but unused" warnings in this

+# module, but to preserve other warnings. So, don't check this module at all.

+

+# Copyright 2022 The HuggingFace Team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+from typing import TYPE_CHECKING

+

+# rely on isort to merge the imports

+from ...utils import OptionalDependencyNotAvailable, _LazyModule, is_tokenizers_available, is_torch_available

+

+

+_import_structure = {

+ "configuration_markuplm": ["MARKUPLM_PRETRAINED_CONFIG_ARCHIVE_MAP", "MarkupLMConfig"],

+ "feature_extraction_markuplm": ["MarkupLMFeatureExtractor"],

+ "processing_markuplm": ["MarkupLMProcessor"],

+ "tokenization_markuplm": ["MarkupLMTokenizer"],

+}

+

+try:

+ if not is_tokenizers_available():

+ raise OptionalDependencyNotAvailable()

+except OptionalDependencyNotAvailable:

+ pass

+else:

+ _import_structure["tokenization_markuplm_fast"] = ["MarkupLMTokenizerFast"]

+

+try:

+ if not is_torch_available():

+ raise OptionalDependencyNotAvailable()

+except OptionalDependencyNotAvailable:

+ pass

+else:

+ _import_structure["modeling_markuplm"] = [

+ "MARKUPLM_PRETRAINED_MODEL_ARCHIVE_LIST",

+ "MarkupLMForQuestionAnswering",

+ "MarkupLMForSequenceClassification",

+ "MarkupLMForTokenClassification",

+ "MarkupLMModel",

+ "MarkupLMPreTrainedModel",

+ ]

+

+

+if TYPE_CHECKING:

+ from .configuration_markuplm import MARKUPLM_PRETRAINED_CONFIG_ARCHIVE_MAP, MarkupLMConfig

+ from .feature_extraction_markuplm import MarkupLMFeatureExtractor

+ from .processing_markuplm import MarkupLMProcessor

+ from .tokenization_markuplm import MarkupLMTokenizer

+

+ try:

+ if not is_tokenizers_available():

+ raise OptionalDependencyNotAvailable()

+ except OptionalDependencyNotAvailable:

+ pass

+ else:

+ from .tokenization_markuplm_fast import MarkupLMTokenizerFast

+

+ try:

+ if not is_torch_available():

+ raise OptionalDependencyNotAvailable()

+ except OptionalDependencyNotAvailable:

+ pass

+ else:

+ from .modeling_markuplm import (

+ MARKUPLM_PRETRAINED_MODEL_ARCHIVE_LIST,

+ MarkupLMForQuestionAnswering,

+ MarkupLMForSequenceClassification,

+ MarkupLMForTokenClassification,

+ MarkupLMModel,

+ MarkupLMPreTrainedModel,

+ )

+

+

+else:

+ import sys

+

+ sys.modules[__name__] = _LazyModule(__name__, globals()["__file__"], _import_structure)

diff --git a/src/transformers/models/markuplm/configuration_markuplm.py b/src/transformers/models/markuplm/configuration_markuplm.py

new file mode 100644

index 00000000000..a7676d7db4b

--- /dev/null

+++ b/src/transformers/models/markuplm/configuration_markuplm.py

@@ -0,0 +1,151 @@

+# coding=utf-8

+# Copyright 2021, The Microsoft Research Asia MarkupLM Team authors

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+""" MarkupLM model configuration"""

+

+from transformers.models.roberta.configuration_roberta import RobertaConfig

+from transformers.utils import logging

+

+

+logger = logging.get_logger(__name__)

+

+MARKUPLM_PRETRAINED_CONFIG_ARCHIVE_MAP = {

+ "microsoft/markuplm-base": "https://huggingface.co/microsoft/markuplm-base/resolve/main/config.json",

+ "microsoft/markuplm-large": "https://huggingface.co/microsoft/markuplm-large/resolve/main/config.json",

+}

+

+

+class MarkupLMConfig(RobertaConfig):

+ r"""

+ This is the configuration class to store the configuration of a [`MarkupLMModel`]. It is used to instantiate a

+ MarkupLM model according to the specified arguments, defining the model architecture. Instantiating a configuration

+ with the defaults will yield a similar configuration to that of the MarkupLM

+ [microsoft/markuplm-base-uncased](https://huggingface.co/microsoft/markuplm-base-uncased) architecture.

+

+ Configuration objects inherit from [`BertConfig`] and can be used to control the model outputs. Read the

+ documentation from [`BertConfig`] for more information.

+

+ Args:

+ vocab_size (`int`, *optional*, defaults to 30522):

+ Vocabulary size of the MarkupLM model. Defines the different tokens that can be represented by the

+ *inputs_ids* passed to the forward method of [`MarkupLMModel`].

+ hidden_size (`int`, *optional*, defaults to 768):

+ Dimensionality of the encoder layers and the pooler layer.

+ num_hidden_layers (`int`, *optional*, defaults to 12):

+ Number of hidden layers in the Transformer encoder.

+ num_attention_heads (`int`, *optional*, defaults to 12):

+ Number of attention heads for each attention layer in the Transformer encoder.

+ intermediate_size (`int`, *optional*, defaults to 3072):

+ Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

+ hidden_act (`str` or `function`, *optional*, defaults to `"gelu"`):

+ The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

+ `"relu"`, `"silu"` and `"gelu_new"` are supported.

+ hidden_dropout_prob (`float`, *optional*, defaults to 0.1):

+ The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

+ attention_probs_dropout_prob (`float`, *optional*, defaults to 0.1):

+ The dropout ratio for the attention probabilities.

+ max_position_embeddings (`int`, *optional*, defaults to 512):

+ The maximum sequence length that this model might ever be used with. Typically set this to something large

+ just in case (e.g., 512 or 1024 or 2048).

+ type_vocab_size (`int`, *optional*, defaults to 2):

+ The vocabulary size of the `token_type_ids` passed into [`MarkupLMModel`].

+ initializer_range (`float`, *optional*, defaults to 0.02):

+ The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

+ layer_norm_eps (`float`, *optional*, defaults to 1e-12):

+ The epsilon used by the layer normalization layers.

+ gradient_checkpointing (`bool`, *optional*, defaults to `False`):

+ If True, use gradient checkpointing to save memory at the expense of slower backward pass.

+ max_tree_id_unit_embeddings (`int`, *optional*, defaults to 1024):

+ The maximum value that the tree id unit embedding might ever use. Typically set this to something large

+ just in case (e.g., 1024).

+ max_xpath_tag_unit_embeddings (`int`, *optional*, defaults to 256):

+ The maximum value that the xpath tag unit embedding might ever use. Typically set this to something large

+ just in case (e.g., 256).

+ max_xpath_subs_unit_embeddings (`int`, *optional*, defaults to 1024):

+ The maximum value that the xpath subscript unit embedding might ever use. Typically set this to something

+ large just in case (e.g., 1024).

+ tag_pad_id (`int`, *optional*, defaults to 216):

+ The id of the padding token in the xpath tags.

+ subs_pad_id (`int`, *optional*, defaults to 1001):

+ The id of the padding token in the xpath subscripts.

+ xpath_tag_unit_hidden_size (`int`, *optional*, defaults to 32):

+ The hidden size of each tree id unit. One complete tree index will have

+ (50*xpath_tag_unit_hidden_size)-dim.

+ max_depth (`int`, *optional*, defaults to 50):

+ The maximum depth in xpath.

+

+ Examples:

+

+ ```python

+ >>> from transformers import MarkupLMModel, MarkupLMConfig

+

+ >>> # Initializing a MarkupLM microsoft/markuplm-base style configuration

+ >>> configuration = MarkupLMConfig()

+

+ >>> # Initializing a model from the microsoft/markuplm-base style configuration

+ >>> model = MarkupLMModel(configuration)

+

+ >>> # Accessing the model configuration

+ >>> configuration = model.config

+ ```"""

+ model_type = "markuplm"

+

+ def __init__(

+ self,

+ vocab_size=30522,

+ hidden_size=768,

+ num_hidden_layers=12,

+ num_attention_heads=12,

+ intermediate_size=3072,

+ hidden_act="gelu",

+ hidden_dropout_prob=0.1,

+ attention_probs_dropout_prob=0.1,

+ max_position_embeddings=512,

+ type_vocab_size=2,

+ initializer_range=0.02,

+ layer_norm_eps=1e-12,

+ pad_token_id=0,

+ gradient_checkpointing=False,

+ max_xpath_tag_unit_embeddings=256,

+ max_xpath_subs_unit_embeddings=1024,

+ tag_pad_id=216,

+ subs_pad_id=1001,

+ xpath_unit_hidden_size=32,

+ max_depth=50,

+ **kwargs

+ ):

+ super().__init__(

+ vocab_size=vocab_size,

+ hidden_size=hidden_size,

+ num_hidden_layers=num_hidden_layers,

+ num_attention_heads=num_attention_heads,

+ intermediate_size=intermediate_size,

+ hidden_act=hidden_act,

+ hidden_dropout_prob=hidden_dropout_prob,

+ attention_probs_dropout_prob=attention_probs_dropout_prob,

+ max_position_embeddings=max_position_embeddings,

+ type_vocab_size=type_vocab_size,

+ initializer_range=initializer_range,

+ layer_norm_eps=layer_norm_eps,

+ pad_token_id=pad_token_id,

+ gradient_checkpointing=gradient_checkpointing,

+ **kwargs,

+ )

+ # additional properties

+ self.max_depth = max_depth

+ self.max_xpath_tag_unit_embeddings = max_xpath_tag_unit_embeddings

+ self.max_xpath_subs_unit_embeddings = max_xpath_subs_unit_embeddings

+ self.tag_pad_id = tag_pad_id

+ self.subs_pad_id = subs_pad_id

+ self.xpath_unit_hidden_size = xpath_unit_hidden_size

diff --git a/src/transformers/models/markuplm/feature_extraction_markuplm.py b/src/transformers/models/markuplm/feature_extraction_markuplm.py

new file mode 100644

index 00000000000..b20349fafb0

--- /dev/null

+++ b/src/transformers/models/markuplm/feature_extraction_markuplm.py

@@ -0,0 +1,183 @@

+# coding=utf-8

+# Copyright 2022 The HuggingFace Inc. team.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+"""

+Feature extractor class for MarkupLM.

+"""

+

+import html

+

+from ...feature_extraction_utils import BatchFeature, FeatureExtractionMixin

+from ...utils import is_bs4_available, logging, requires_backends

+

+

+if is_bs4_available():

+ import bs4

+ from bs4 import BeautifulSoup

+

+

+logger = logging.get_logger(__name__)

+

+

+class MarkupLMFeatureExtractor(FeatureExtractionMixin):

+ r"""

+ Constructs a MarkupLM feature extractor. This can be used to get a list of nodes and corresponding xpaths from HTML

+ strings.

+

+ This feature extractor inherits from [`~feature_extraction_utils.PreTrainedFeatureExtractor`] which contains most

+ of the main methods. Users should refer to this superclass for more information regarding those methods.

+

+ """

+

+ def __init__(self, **kwargs):

+ requires_backends(self, ["bs4"])

+ super().__init__(**kwargs)

+

+ def xpath_soup(self, element):

+ xpath_tags = []

+ xpath_subscripts = []

+ child = element if element.name else element.parent

+ for parent in child.parents: # type: bs4.element.Tag

+ siblings = parent.find_all(child.name, recursive=False)

+ xpath_tags.append(child.name)

+ xpath_subscripts.append(

+ 0 if 1 == len(siblings) else next(i for i, s in enumerate(siblings, 1) if s is child)

+ )

+ child = parent

+ xpath_tags.reverse()

+ xpath_subscripts.reverse()

+ return xpath_tags, xpath_subscripts

+

+ def get_three_from_single(self, html_string):

+ html_code = BeautifulSoup(html_string, "html.parser")

+

+ all_doc_strings = []

+ string2xtag_seq = []

+ string2xsubs_seq = []

+

+ for element in html_code.descendants:

+ if type(element) == bs4.element.NavigableString:

+ if type(element.parent) != bs4.element.Tag:

+ continue

+

+ text_in_this_tag = html.unescape(element).strip()

+ if not text_in_this_tag:

+ continue

+

+ all_doc_strings.append(text_in_this_tag)

+

+ xpath_tags, xpath_subscripts = self.xpath_soup(element)

+ string2xtag_seq.append(xpath_tags)

+ string2xsubs_seq.append(xpath_subscripts)

+

+ if len(all_doc_strings) != len(string2xtag_seq):

+ raise ValueError("Number of doc strings and xtags does not correspond")

+ if len(all_doc_strings) != len(string2xsubs_seq):

+ raise ValueError("Number of doc strings and xsubs does not correspond")

+

+ return all_doc_strings, string2xtag_seq, string2xsubs_seq

+

+ def construct_xpath(self, xpath_tags, xpath_subscripts):

+ xpath = ""

+ for tagname, subs in zip(xpath_tags, xpath_subscripts):

+ xpath += f"/{tagname}"

+ if subs != 0:

+ xpath += f"[{subs}]"

+ return xpath

+

+ def __call__(self, html_strings) -> BatchFeature:

+ """

+ Main method to prepare for the model one or several HTML strings.

+

+ Args:

+ html_strings (`str`, `List[str]`):

+ The HTML string or batch of HTML strings from which to extract nodes and corresponding xpaths.

+

+ Returns:

+ [`BatchFeature`]: A [`BatchFeature`] with the following fields:

+

+ - **nodes** -- Nodes.

+ - **xpaths** -- Corresponding xpaths.

+

+ Examples:

+

+ ```python

+ >>> from transformers import MarkupLMFeatureExtractor

+

+ >>> page_name_1 = "page1.html"

+ >>> page_name_2 = "page2.html"

+ >>> page_name_3 = "page3.html"

+

+ >>> with open(page_name_1) as f:

+ ... single_html_string = f.read()

+

+ >>> feature_extractor = MarkupLMFeatureExtractor()

+

+ >>> # single example

+ >>> encoding = feature_extractor(single_html_string)

+ >>> print(encoding.keys())

+ >>> # dict_keys(['nodes', 'xpaths'])

+

+ >>> # batched example

+

+ >>> multi_html_strings = []

+

+ >>> with open(page_name_2) as f:

+ ... multi_html_strings.append(f.read())

+ >>> with open(page_name_3) as f:

+ ... multi_html_strings.append(f.read())

+

+ >>> encoding = feature_extractor(multi_html_strings)

+ >>> print(encoding.keys())

+ >>> # dict_keys(['nodes', 'xpaths'])

+ ```"""

+

+ # Input type checking for clearer error

+ valid_strings = False

+

+ # Check that strings has a valid type

+ if isinstance(html_strings, str):

+ valid_strings = True

+ elif isinstance(html_strings, (list, tuple)):

+ if len(html_strings) == 0 or isinstance(html_strings[0], str):

+ valid_strings = True

+

+ if not valid_strings:

+ raise ValueError(

+ "HTML strings must of type `str`, `List[str]` (batch of examples), "

+ f"but is of type {type(html_strings)}."

+ )

+

+ is_batched = bool(isinstance(html_strings, (list, tuple)) and (isinstance(html_strings[0], str)))

+

+ if not is_batched:

+ html_strings = [html_strings]

+

+ # Get nodes + xpaths

+ nodes = []

+ xpaths = []

+ for html_string in html_strings:

+ all_doc_strings, string2xtag_seq, string2xsubs_seq = self.get_three_from_single(html_string)

+ nodes.append(all_doc_strings)

+ xpath_strings = []

+ for node, tag_list, sub_list in zip(all_doc_strings, string2xtag_seq, string2xsubs_seq):

+ xpath_string = self.construct_xpath(tag_list, sub_list)

+ xpath_strings.append(xpath_string)

+ xpaths.append(xpath_strings)

+

+ # return as Dict

+ data = {"nodes": nodes, "xpaths": xpaths}

+ encoded_inputs = BatchFeature(data=data, tensor_type=None)

+

+ return encoded_inputs

diff --git a/src/transformers/models/markuplm/modeling_markuplm.py b/src/transformers/models/markuplm/modeling_markuplm.py

new file mode 100755

index 00000000000..0a8e9050142

--- /dev/null

+++ b/src/transformers/models/markuplm/modeling_markuplm.py

@@ -0,0 +1,1300 @@

+# coding=utf-8

+# Copyright 2022 Microsoft Research Asia and the HuggingFace Inc. team.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+""" PyTorch MarkupLM model."""

+

+import math

+import os

+from typing import Optional, Tuple, Union

+

+import torch

+import torch.utils.checkpoint

+from torch import nn

+from torch.nn import BCEWithLogitsLoss, CrossEntropyLoss, MSELoss

+

+from transformers.activations import ACT2FN

+from transformers.file_utils import (

+ add_start_docstrings,

+ add_start_docstrings_to_model_forward,

+ replace_return_docstrings,

+)

+from transformers.modeling_outputs import (

+ BaseModelOutputWithPastAndCrossAttentions,

+ BaseModelOutputWithPoolingAndCrossAttentions,

+ MaskedLMOutput,

+ QuestionAnsweringModelOutput,

+ SequenceClassifierOutput,

+ TokenClassifierOutput,

+)

+from transformers.modeling_utils import (

+ PreTrainedModel,

+ apply_chunking_to_forward,

+ find_pruneable_heads_and_indices,

+ prune_linear_layer,

+)

+from transformers.utils import logging

+

+from .configuration_markuplm import MarkupLMConfig

+

+

+logger = logging.get_logger(__name__)

+

+_CHECKPOINT_FOR_DOC = "microsoft/markuplm-base"

+_CONFIG_FOR_DOC = "MarkupLMConfig"

+_TOKENIZER_FOR_DOC = "MarkupLMTokenizer"

+

+MARKUPLM_PRETRAINED_MODEL_ARCHIVE_LIST = [

+ "microsoft/markuplm-base",

+ "microsoft/markuplm-large",

+]

+

+

+class XPathEmbeddings(nn.Module):

+ """Construct the embeddings from xpath tags and subscripts.

+

+ We drop tree-id in this version, as its info can be covered by xpath.

+ """

+

+ def __init__(self, config):

+ super(XPathEmbeddings, self).__init__()

+ self.max_depth = config.max_depth

+

+ self.xpath_unitseq2_embeddings = nn.Linear(config.xpath_unit_hidden_size * self.max_depth, config.hidden_size)

+

+ self.dropout = nn.Dropout(config.hidden_dropout_prob)

+

+ self.activation = nn.ReLU()

+ self.xpath_unitseq2_inner = nn.Linear(config.xpath_unit_hidden_size * self.max_depth, 4 * config.hidden_size)

+ self.inner2emb = nn.Linear(4 * config.hidden_size, config.hidden_size)

+

+ self.xpath_tag_sub_embeddings = nn.ModuleList(

+ [

+ nn.Embedding(config.max_xpath_tag_unit_embeddings, config.xpath_unit_hidden_size)

+ for _ in range(self.max_depth)

+ ]

+ )

+

+ self.xpath_subs_sub_embeddings = nn.ModuleList(

+ [

+ nn.Embedding(config.max_xpath_subs_unit_embeddings, config.xpath_unit_hidden_size)

+ for _ in range(self.max_depth)

+ ]

+ )

+

+ def forward(self, xpath_tags_seq=None, xpath_subs_seq=None):

+ xpath_tags_embeddings = []

+ xpath_subs_embeddings = []

+

+ for i in range(self.max_depth):

+ xpath_tags_embeddings.append(self.xpath_tag_sub_embeddings[i](xpath_tags_seq[:, :, i]))

+ xpath_subs_embeddings.append(self.xpath_subs_sub_embeddings[i](xpath_subs_seq[:, :, i]))

+

+ xpath_tags_embeddings = torch.cat(xpath_tags_embeddings, dim=-1)

+ xpath_subs_embeddings = torch.cat(xpath_subs_embeddings, dim=-1)

+

+ xpath_embeddings = xpath_tags_embeddings + xpath_subs_embeddings

+

+ xpath_embeddings = self.inner2emb(self.dropout(self.activation(self.xpath_unitseq2_inner(xpath_embeddings))))

+

+ return xpath_embeddings

+

+

+# Copied from transformers.models.roberta.modeling_roberta.create_position_ids_from_input_ids

+def create_position_ids_from_input_ids(input_ids, padding_idx, past_key_values_length=0):

+ """

+ Replace non-padding symbols with their position numbers. Position numbers begin at padding_idx+1. Padding symbols

+ are ignored. This is modified from fairseq's `utils.make_positions`.

+

+ Args:

+ x: torch.Tensor x:

+

+ Returns: torch.Tensor

+ """

+ # The series of casts and type-conversions here are carefully balanced to both work with ONNX export and XLA.

+ mask = input_ids.ne(padding_idx).int()

+ incremental_indices = (torch.cumsum(mask, dim=1).type_as(mask) + past_key_values_length) * mask

+ return incremental_indices.long() + padding_idx

+

+

+class MarkupLMEmbeddings(nn.Module):

+ """Construct the embeddings from word, position and token_type embeddings."""

+

+ def __init__(self, config):

+ super(MarkupLMEmbeddings, self).__init__()

+ self.config = config

+ self.word_embeddings = nn.Embedding(config.vocab_size, config.hidden_size, padding_idx=config.pad_token_id)

+ self.position_embeddings = nn.Embedding(config.max_position_embeddings, config.hidden_size)

+

+ self.max_depth = config.max_depth

+

+ self.xpath_embeddings = XPathEmbeddings(config)

+

+ self.token_type_embeddings = nn.Embedding(config.type_vocab_size, config.hidden_size)

+

+ self.LayerNorm = nn.LayerNorm(config.hidden_size, eps=config.layer_norm_eps)

+ self.dropout = nn.Dropout(config.hidden_dropout_prob)

+

+ self.register_buffer("position_ids", torch.arange(config.max_position_embeddings).expand((1, -1)))

+

+ self.padding_idx = config.pad_token_id

+ self.position_embeddings = nn.Embedding(

+ config.max_position_embeddings, config.hidden_size, padding_idx=self.padding_idx

+ )

+

+ # Copied from transformers.models.roberta.modeling_roberta.RobertaEmbeddings.create_position_ids_from_inputs_embeds

+ def create_position_ids_from_inputs_embeds(self, inputs_embeds):

+ """

+ We are provided embeddings directly. We cannot infer which are padded so just generate sequential position ids.

+

+ Args:

+ inputs_embeds: torch.Tensor

+

+ Returns: torch.Tensor

+ """

+ input_shape = inputs_embeds.size()[:-1]

+ sequence_length = input_shape[1]

+

+ position_ids = torch.arange(

+ self.padding_idx + 1, sequence_length + self.padding_idx + 1, dtype=torch.long, device=inputs_embeds.device

+ )

+ return position_ids.unsqueeze(0).expand(input_shape)

+

+ def forward(

+ self,

+ input_ids=None,

+ xpath_tags_seq=None,

+ xpath_subs_seq=None,

+ token_type_ids=None,

+ position_ids=None,

+ inputs_embeds=None,

+ past_key_values_length=0,

+ ):

+ if input_ids is not None:

+ input_shape = input_ids.size()

+ else:

+ input_shape = inputs_embeds.size()[:-1]

+

+ device = input_ids.device if input_ids is not None else inputs_embeds.device

+

+ if position_ids is None:

+ if input_ids is not None:

+ # Create the position ids from the input token ids. Any padded tokens remain padded.

+ position_ids = create_position_ids_from_input_ids(input_ids, self.padding_idx, past_key_values_length)

+ else:

+ position_ids = self.create_position_ids_from_inputs_embeds(inputs_embeds)

+

+ if token_type_ids is None:

+ token_type_ids = torch.zeros(input_shape, dtype=torch.long, device=device)

+

+ if inputs_embeds is None:

+ inputs_embeds = self.word_embeddings(input_ids)

+

+ # prepare xpath seq

+ if xpath_tags_seq is None:

+ xpath_tags_seq = self.config.tag_pad_id * torch.ones(

+ tuple(list(input_shape) + [self.max_depth]), dtype=torch.long, device=device

+ )

+ if xpath_subs_seq is None:

+ xpath_subs_seq = self.config.subs_pad_id * torch.ones(

+ tuple(list(input_shape) + [self.max_depth]), dtype=torch.long, device=device

+ )

+

+ words_embeddings = inputs_embeds

+ position_embeddings = self.position_embeddings(position_ids)

+

+ token_type_embeddings = self.token_type_embeddings(token_type_ids)

+

+ xpath_embeddings = self.xpath_embeddings(xpath_tags_seq, xpath_subs_seq)

+ embeddings = words_embeddings + position_embeddings + token_type_embeddings + xpath_embeddings

+

+ embeddings = self.LayerNorm(embeddings)

+ embeddings = self.dropout(embeddings)

+ return embeddings

+

+

+# Copied from transformers.models.bert.modeling_bert.BertSelfOutput with Bert->MarkupLM

+class MarkupLMSelfOutput(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.dense = nn.Linear(config.hidden_size, config.hidden_size)

+ self.LayerNorm = nn.LayerNorm(config.hidden_size, eps=config.layer_norm_eps)

+ self.dropout = nn.Dropout(config.hidden_dropout_prob)

+

+ def forward(self, hidden_states: torch.Tensor, input_tensor: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.dropout(hidden_states)

+ hidden_states = self.LayerNorm(hidden_states + input_tensor)

+ return hidden_states

+

+

+# Copied from transformers.models.bert.modeling_bert.BertIntermediate

+class MarkupLMIntermediate(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.dense = nn.Linear(config.hidden_size, config.intermediate_size)

+ if isinstance(config.hidden_act, str):

+ self.intermediate_act_fn = ACT2FN[config.hidden_act]

+ else:

+ self.intermediate_act_fn = config.hidden_act

+

+ def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.intermediate_act_fn(hidden_states)

+ return hidden_states

+

+

+# Copied from transformers.models.bert.modeling_bert.BertOutput with Bert->MarkupLM

+class MarkupLMOutput(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.dense = nn.Linear(config.intermediate_size, config.hidden_size)

+ self.LayerNorm = nn.LayerNorm(config.hidden_size, eps=config.layer_norm_eps)

+ self.dropout = nn.Dropout(config.hidden_dropout_prob)

+

+ def forward(self, hidden_states: torch.Tensor, input_tensor: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.dropout(hidden_states)

+ hidden_states = self.LayerNorm(hidden_states + input_tensor)

+ return hidden_states

+

+

+# Copied from transformers.models.bert.modeling_bert.BertPooler

+class MarkupLMPooler(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.dense = nn.Linear(config.hidden_size, config.hidden_size)

+ self.activation = nn.Tanh()

+

+ def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

+ # We "pool" the model by simply taking the hidden state corresponding

+ # to the first token.

+ first_token_tensor = hidden_states[:, 0]

+ pooled_output = self.dense(first_token_tensor)

+ pooled_output = self.activation(pooled_output)

+ return pooled_output

+

+

+# Copied from transformers.models.bert.modeling_bert.BertPredictionHeadTransform with Bert->MarkupLM

+class MarkupLMPredictionHeadTransform(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.dense = nn.Linear(config.hidden_size, config.hidden_size)

+ if isinstance(config.hidden_act, str):

+ self.transform_act_fn = ACT2FN[config.hidden_act]

+ else:

+ self.transform_act_fn = config.hidden_act

+ self.LayerNorm = nn.LayerNorm(config.hidden_size, eps=config.layer_norm_eps)

+

+ def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.dense(hidden_states)

+ hidden_states = self.transform_act_fn(hidden_states)

+ hidden_states = self.LayerNorm(hidden_states)

+ return hidden_states

+

+

+# Copied from transformers.models.bert.modeling_bert.BertLMPredictionHead with Bert->MarkupLM

+class MarkupLMLMPredictionHead(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.transform = MarkupLMPredictionHeadTransform(config)

+

+ # The output weights are the same as the input embeddings, but there is

+ # an output-only bias for each token.

+ self.decoder = nn.Linear(config.hidden_size, config.vocab_size, bias=False)

+

+ self.bias = nn.Parameter(torch.zeros(config.vocab_size))

+

+ # Need a link between the two variables so that the bias is correctly resized with `resize_token_embeddings`

+ self.decoder.bias = self.bias

+

+ def forward(self, hidden_states):

+ hidden_states = self.transform(hidden_states)

+ hidden_states = self.decoder(hidden_states)

+ return hidden_states

+

+

+# Copied from transformers.models.bert.modeling_bert.BertOnlyMLMHead with Bert->MarkupLM

+class MarkupLMOnlyMLMHead(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.predictions = MarkupLMLMPredictionHead(config)

+

+ def forward(self, sequence_output: torch.Tensor) -> torch.Tensor:

+ prediction_scores = self.predictions(sequence_output)

+ return prediction_scores

+

+

+# Copied from transformers.models.bert.modeling_bert.BertSelfAttention with Bert->MarkupLM

+class MarkupLMSelfAttention(nn.Module):

+ def __init__(self, config, position_embedding_type=None):

+ super().__init__()

+ if config.hidden_size % config.num_attention_heads != 0 and not hasattr(config, "embedding_size"):

+ raise ValueError(

+ f"The hidden size ({config.hidden_size}) is not a multiple of the number of attention "

+ f"heads ({config.num_attention_heads})"

+ )

+

+ self.num_attention_heads = config.num_attention_heads

+ self.attention_head_size = int(config.hidden_size / config.num_attention_heads)

+ self.all_head_size = self.num_attention_heads * self.attention_head_size

+

+ self.query = nn.Linear(config.hidden_size, self.all_head_size)

+ self.key = nn.Linear(config.hidden_size, self.all_head_size)

+ self.value = nn.Linear(config.hidden_size, self.all_head_size)

+

+ self.dropout = nn.Dropout(config.attention_probs_dropout_prob)

+ self.position_embedding_type = position_embedding_type or getattr(

+ config, "position_embedding_type", "absolute"

+ )

+ if self.position_embedding_type == "relative_key" or self.position_embedding_type == "relative_key_query":

+ self.max_position_embeddings = config.max_position_embeddings

+ self.distance_embedding = nn.Embedding(2 * config.max_position_embeddings - 1, self.attention_head_size)

+

+ self.is_decoder = config.is_decoder

+

+ def transpose_for_scores(self, x: torch.Tensor) -> torch.Tensor:

+ new_x_shape = x.size()[:-1] + (self.num_attention_heads, self.attention_head_size)

+ x = x.view(new_x_shape)

+ return x.permute(0, 2, 1, 3)

+

+ def forward(

+ self,

+ hidden_states: torch.Tensor,

+ attention_mask: Optional[torch.FloatTensor] = None,

+ head_mask: Optional[torch.FloatTensor] = None,

+ encoder_hidden_states: Optional[torch.FloatTensor] = None,

+ encoder_attention_mask: Optional[torch.FloatTensor] = None,

+ past_key_value: Optional[Tuple[Tuple[torch.FloatTensor]]] = None,

+ output_attentions: Optional[bool] = False,

+ ) -> Tuple[torch.Tensor]:

+ mixed_query_layer = self.query(hidden_states)

+

+ # If this is instantiated as a cross-attention module, the keys

+ # and values come from an encoder; the attention mask needs to be

+ # such that the encoder's padding tokens are not attended to.

+ is_cross_attention = encoder_hidden_states is not None

+

+ if is_cross_attention and past_key_value is not None:

+ # reuse k,v, cross_attentions

+ key_layer = past_key_value[0]

+ value_layer = past_key_value[1]

+ attention_mask = encoder_attention_mask

+ elif is_cross_attention:

+ key_layer = self.transpose_for_scores(self.key(encoder_hidden_states))

+ value_layer = self.transpose_for_scores(self.value(encoder_hidden_states))

+ attention_mask = encoder_attention_mask

+ elif past_key_value is not None:

+ key_layer = self.transpose_for_scores(self.key(hidden_states))

+ value_layer = self.transpose_for_scores(self.value(hidden_states))

+ key_layer = torch.cat([past_key_value[0], key_layer], dim=2)

+ value_layer = torch.cat([past_key_value[1], value_layer], dim=2)

+ else:

+ key_layer = self.transpose_for_scores(self.key(hidden_states))

+ value_layer = self.transpose_for_scores(self.value(hidden_states))

+

+ query_layer = self.transpose_for_scores(mixed_query_layer)

+

+ if self.is_decoder:

+ # if cross_attention save Tuple(torch.Tensor, torch.Tensor) of all cross attention key/value_states.

+ # Further calls to cross_attention layer can then reuse all cross-attention

+ # key/value_states (first "if" case)

+ # if uni-directional self-attention (decoder) save Tuple(torch.Tensor, torch.Tensor) of

+ # all previous decoder key/value_states. Further calls to uni-directional self-attention

+ # can concat previous decoder key/value_states to current projected key/value_states (third "elif" case)

+ # if encoder bi-directional self-attention `past_key_value` is always `None`

+ past_key_value = (key_layer, value_layer)

+

+ # Take the dot product between "query" and "key" to get the raw attention scores.

+ attention_scores = torch.matmul(query_layer, key_layer.transpose(-1, -2))

+

+ if self.position_embedding_type == "relative_key" or self.position_embedding_type == "relative_key_query":

+ seq_length = hidden_states.size()[1]

+ position_ids_l = torch.arange(seq_length, dtype=torch.long, device=hidden_states.device).view(-1, 1)

+ position_ids_r = torch.arange(seq_length, dtype=torch.long, device=hidden_states.device).view(1, -1)

+ distance = position_ids_l - position_ids_r

+ positional_embedding = self.distance_embedding(distance + self.max_position_embeddings - 1)

+ positional_embedding = positional_embedding.to(dtype=query_layer.dtype) # fp16 compatibility

+

+ if self.position_embedding_type == "relative_key":

+ relative_position_scores = torch.einsum("bhld,lrd->bhlr", query_layer, positional_embedding)

+ attention_scores = attention_scores + relative_position_scores

+ elif self.position_embedding_type == "relative_key_query":

+ relative_position_scores_query = torch.einsum("bhld,lrd->bhlr", query_layer, positional_embedding)

+ relative_position_scores_key = torch.einsum("bhrd,lrd->bhlr", key_layer, positional_embedding)

+ attention_scores = attention_scores + relative_position_scores_query + relative_position_scores_key

+

+ attention_scores = attention_scores / math.sqrt(self.attention_head_size)

+ if attention_mask is not None:

+ # Apply the attention mask is (precomputed for all layers in MarkupLMModel forward() function)

+ attention_scores = attention_scores + attention_mask

+

+ # Normalize the attention scores to probabilities.

+ attention_probs = nn.functional.softmax(attention_scores, dim=-1)

+

+ # This is actually dropping out entire tokens to attend to, which might

+ # seem a bit unusual, but is taken from the original Transformer paper.

+ attention_probs = self.dropout(attention_probs)

+

+ # Mask heads if we want to

+ if head_mask is not None:

+ attention_probs = attention_probs * head_mask

+

+ context_layer = torch.matmul(attention_probs, value_layer)

+

+ context_layer = context_layer.permute(0, 2, 1, 3).contiguous()

+ new_context_layer_shape = context_layer.size()[:-2] + (self.all_head_size,)

+ context_layer = context_layer.view(new_context_layer_shape)

+

+ outputs = (context_layer, attention_probs) if output_attentions else (context_layer,)

+

+ if self.is_decoder: